Our Visiontek R9 Fury X 4GB sample arrived with us just after the official AMD launch a short while ago. We decided to take more time and to retest all hardware with the latest AMD and Nvidia drivers – rather than rushing to get a review up as soon as possible with some comparison cards running on older driver revisions. Special thanks to Visiontek and (many) other AMD partners who offered us samples for this review.

The R9 Fury X sample is protected inside thick slabs of foam. This is a self-contained, liquid-cooled unit, so additional protection is needed to prevent leaks caused by rough handling during shipping.

Not an extensive bundle, but the DVI converter cable will likely prove handy for many people using an older screen without HDMI or DisplayPort connectors.

The Fury X 4GB card is very compact, thanks to the HBM implementation. It measures around 190 millimeters long, although additional mounting space will be needed in the case to fit the 120mm radiator and fan. The R9 Nano, a future release, is said to be only 155mm long!

The radiator is compact and won't be difficult to install close to the card, although we can see this causing some issues for people. Many of our readers have a 120mm or 140mm ‘all in one’ CPU cooler already fitted in the rear exhaust position, so this would need to be moved out of the way for the R9 Fury X radiator to be fitted.

The R9 Fury X is nicely designed and we like the surface material, which feels great in the hand. It is a multi-piece, die-cast aluminum construction with ‘black soft touch texture side plates’. The Black Nickel aluminum contrasts well with the other materials. There is talk of partners using their own custom faceplates in future.

The card takes power from two 8-pin PCI-e connectors. Above these two connectors is a row of LED lights which are either red or green in operation. A single green LED indicates that AMD ZeroCore is active. The more red LEDs are showing, the more active the GPU is. The ‘RADEON’ logo on the side also lights up in red when the system is powered up.

AMD have ditched VGA and DVI connectivity on the rear I/O plate, opting for DisplayPort 1.2a and HDMI ports. We have no problems with this, especially as the card ships with a DVI converter cable. However, there is one rather niggling issue: AMD are still using HDMI 1.4a ports on their cards, which are limited to 30Hz at Ultra HD 4K.

If you want to game on a large Ultra HD 4K television, it is likely it won't have a DisplayPort connector, as only a small percentage do. You are therefore not able to get 60Hz at the native resolution … and no one wants to play games at 30Hz. Nvidia have had full HDMI 2.0 support for quite some time now. This may not be an issue for many, but we have already noticed enthusiast gamers complaining on our Facebook page about AMD's lack of HDMI 2.0 support. I think this is a rather glaring oversight by AMD.
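To put a rough number on the HDMI limitation, the short sketch below estimates the pixel clock needed for Ultra HD at 30Hz and 60Hz and compares it with the maximum clock each HDMI revision allows. The figures are our assumptions rather than anything measured in this review: the standard CTA-861 4K timing (4400 x 2250 total pixels per frame including blanking), 8-bit RGB output, and ceilings of 340MHz for HDMI 1.4 and 600MHz for HDMI 2.0. The function and variable names are purely illustrative.

```python
# Rough pixel-clock check for Ultra HD (3840x2160) over HDMI.
# Assumptions, not taken from the review:
#   - standard CTA-861 4K timing: 4400 x 2250 total pixels per frame
#     (active picture plus blanking)
#   - 8-bit RGB, so the link clock equals the pixel clock
#   - maximum clock of 340 MHz for HDMI 1.4 and 600 MHz for HDMI 2.0

H_TOTAL = 4400  # total pixels per line, including horizontal blanking
V_TOTAL = 2250  # total lines per frame, including vertical blanking

MAX_CLOCK_MHZ = {"HDMI 1.4": 340.0, "HDMI 2.0": 600.0}


def pixel_clock_mhz(refresh_hz):
    """Pixel clock in MHz needed for the 4K timing at a given refresh rate."""
    return H_TOTAL * V_TOTAL * refresh_hz / 1e6


def supports(standard, refresh_hz):
    """True if the required pixel clock fits within the standard's limit."""
    return pixel_clock_mhz(refresh_hz) <= MAX_CLOCK_MHZ[standard]


if __name__ == "__main__":
    for standard in MAX_CLOCK_MHZ:
        for hz in (30, 60):
            verdict = "ok" if supports(standard, hz) else "too fast"
            print(f"{standard} @ {hz}Hz: {pixel_clock_mhz(hz):.0f} MHz needed, {verdict}")
```

Under these assumptions, 4K at 60Hz needs roughly twice the clock that 4K at 30Hz does, which is why an HDMI 1.4a port tops out at 30Hz at this resolution.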

Getting access to the insides is rather easy and we can see the cooling system is created for AMD by Cooler Master. An all in one pump is in direct contact with the copper plate which covers the GPU core and the HBM memory underneath. Additionally there is a copper pipe and heatsink which covers the VRM components on the PCB. There is a dual BIOS switch, so it is easy enough to flash BIOS 2 and fall back to BIOS 1 in case of problems.

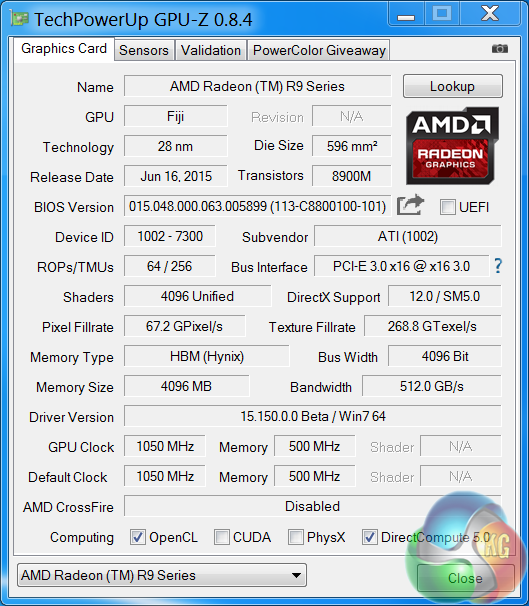

An overview of the Fury X in GPUz – as discussed in detail on the previous page of the review.

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards


There is still a part of me that wonders: if they had just used regular memory instead of HBM, would it have been cheaper?

AMD have recently said that they are working on the coil whine, as it has been noted that it is a bit of an issue – if I find the article I read, I’ll post the link.

thank you for a well written article with great information

What I learned, 980 Ti>Fury X> 980.

Can’t wait for dx12!

That way you would have ended up with another 290x. And we already have them plenty, don’t we?

The fact is Fury x is very exciting piece of engineering. I personally had two of them a week now, and I am yet to even begin to fiddle with everything they can offer 😉

I think if its price was cut to 600$ it would be a great option .. i believe AMD didn’t do that in the first place due to pride only

As always Kitguru thanks for another great review.

Fury seems to be hitting the 4GB limit and stuttering to unplayable levels, 4GB Fury just doesn’t have enough memory for AAA gaming now and it sure isn’t enough to be called a 4K gaming future proofing solution flagship card.

No 28nm GPU needed HBM1 bandwidth and its measly 4GB of VRAM, the HBM bandwidth is wasted and the 4GB of VRAM isn’t 4K gaming worthy.

Gamers are Much better off with a GTX980Ti especially a heavily-overclocked, custom-cooled GTX 980 Ti. from one of Nvidia’s many partners.

https://www.youtube.com/watch?t=226&v=8hnuj1OZAJs

That was a very good read, I will always have a soft spot for AMD but the R9 Fury X try as it might just doesn’t quite live up to the hype or expectations. With Nvidia pretty much showing their full hand in march, It’s nothing short of disappointing to see the Performance crown remain with Nvidia 3 months down the line despite AMD’s best offering. If you still gaming at 1080p and 1440p there really is only one winner here.

Still going to stick with AMD not going to give my money to a company that has to pay off game devs to make their cards look better.

Fury is essentially a double-sized Tonga chip with a tweak (hence the HDMI 1.4a and DP 1.2a). I think using regular GDDR5 it wouldn’t be fast enough to compete

LOL, AMD paid millions to developers to use mantle and make their cards look better.

You’re a AMDuped hypocrite who doesn’t know what the hell he’s talking about.

At 4K AMD fury x CF won the prize! left Nvda Titan x SLI in the dust read the new reviews from Digitalstorm.com and teaktown.com.

How many games use Mantle compared to Nvidia Gameworks again?

Mantle is open. Game works is closed.

Stupid question AMD hypocrite, go buy a watt sucking AMD Rebrandeon and figure out why your question is stupid.

Not a VRAM problem when other cards with 4GB are not stuttering at 4k.

http://www.pcper.com/reviews/Graphics-Cards/AMD-Radeon-R9-Fury-X-4GB-Review-Fiji-Finally-Tested/Grand-Theft-Auto-V

And apparently nvidia are running lower IQ settings, at least in BF4.

http://forums.overclockers.co.uk/showthread.php?t=18679713

Who you trying to fool AMD tool.

Mantle was a bug infested beta AMD API and never open.

Game works are developmental tools that Nvidia invested in and owns why should they share it with AMD, nobody is stopping lazy AMD from making their own.

Dx12 = mantle. AMD is not lazy. The intention is Mantle to be open sourced.

great review, well worth the wait – too many fud reviews up for this card on launch day. Always trust KitGuru to be honest. Thanks – JS.

A great read this morning thanks Allan. I love the methodology and all the tests are on the same graphics driver. A few of the reviews on launch day had poor test methods with GPU’s using 3 or 4 different drivers ! and a few like TTL seemed just like a pat on the back for AMD with many contradictions throughout rather than a proper review – quite easy to spot. Always read KitGuru for honesty and this just shows you cut through the crap to get to the facts. Well done.

They already have a v2 watercooled version out there in the wild. You can compare them by the sticker they have. The v1 cooler is the one used here and the v2 is a silver/chromatic logo.

Cheers!

Have a link with that statement? Because anyone could say that nVidia has paid even more since the times of TWIMTBP titles vs GameEvolved from AMD.

No matter how you slice it, nVidia has burned more dough on “”””””helping”””””” developers than AMD.

Also, we love blanket statements, don’t we? 🙂

Cheers!

Can’t compare other cards with HBM1, apparently Fury isn’t able to use all its 4GB of VRAM and becomes a Stuttering mess. Watch the videos Fury is a FUBAR Flagship that needed more VRAM.

Maybe dx12 will utilize HBM a lot better. I struggle to believe AMD have released a useless card lol. Maybe they have who knows. I am waiting for the strix 980 ti sli. I think AMD are no longer the best option for low prices, it seems NVIDIA have taken that crown along with the best performers. It seems AMD is a pointless option for CPU and GPU

That what AMD Fanatics say, that’s NOT what MS says. The intention of AMD GCN only mantle was to gain an advantage for their watt sucking ReBrandeons before DX12 which will float all GPU boats. After wasting millions on over pumped mantle debt laden AMD dumped their mantle for NOTHING, ZERO, ZILCH, NIX, NIL.

maybe

So you really have no argument I see glad to know.

“racistmalaysian 18 hours ago

Dx12 = mantle. AMD is not lazy. The intention is Mantle to be open sourced.”

your assumptions are out of date and wrong unfortunately , however you can write patches and submit them for upstream Vulkan inclusion….

care of Ryan Smith on July 2, 2015

http://www.anandtech.com/show/9390/the-amd-radeon-r9-fury-x-review/12

“….I wanted to quickly touch upon the state of Mantle now that AMD has given us a bit more insight into what’s going on.

With the Vulkan project having inherited and extended Mantle, Mantle’s external development is at an end for AMD. AMD has already told us in the past that they are essentially taking it back inside, and will be using it as a platform for testing future API developments.

Externally then AMD has now thrown all of their weight behind Vulkan and DirectX 12, telling developers that future games should use those APIs and not Mantle.

In the meantime there is the question of what happens to existing Mantle games. So far there are about half a dozen games that support the API, and for these games Mantle is the only low-level API available to them. Should Mantle disappear, then these games would no longer be able to render at such a low-level.

The situation then is that in discussing the performance results of the R9 Fury X with Mantle, AMD has confirmed that while they are not outright dropping Mantle support, they have ceased all further Mantle optimization.

Of particular note, the Mantle driver has not been optimized at all for GCN 1.2, which includes not just R9 Fury X, but R9 285, R9 380, and the Carrizo APU as well.

Mantle titles will probably still work on these products – and for the record we can’t get Civilization: Beyond Earth to play nicely with the R9 285 via Mantle – but performance is another matter.

Mantle is essentially deprecated at this point, and while AMD isn’t going out of their way to break backwards compatibility they aren’t going to put resources into helping it either. The experiment that is Mantle has come to an end…..”

KitGURU please make ********** WIN 10 benchmarks!!! Worst review ever. So useless.

Sure.

great review – are you blind?!

They did benchmarks on win 7!.. who cares about windows 7…. so useless. The only review where I see Fury X behind GTX 980Ti in Witcher 3 and Metro

Do you even use win 7?!

Win7 tells you everything… who use win7 for gaming?

Why so bad 1440P – how can DX11 on win7 feed 4096GCN cores? It cant… just please remake your bad review on win10.

DX12 won’t do anything extra. All it’s meant to do is give deeper access levels and remove a lot of “going through the CPU” for programs. DX12 is not some kind of magical “fix all” thing. All it is going to do is reduce CPU load and INCREASE GPU LOAD (temps, TDP, etc as well) for games that will be coded in it. THAT’S IT. Maybe there’ll be some extra tech, but aside from that… nothing.


Now I see why AMD didn’t give you a sample. Garbage review. Only 5 games benched? Not a single win for Fury X? Yeah, whatever.


12 year old kids on the internet. Ugh.