We built a system inside a Lian Li chassis with no case fans and fitted a fanless cooler to the CPU. The motherboard is also passively cooled. This gives us a build with almost completely passive cooling, which means we can measure the noise of just the graphics card inside the system when we run looped 3DMark tests.

We measure from a distance of around 1 meter from the closed chassis and 4 feet from the ground to mirror a real world situation. Ambient noise in the room measures 28dBA, which is close to the lower limit of our sound meter. Why do this? It means we can eliminate secondary noise pollution in the test room and concentrate on only the video card. It also brings us slightly closer to industry standards, such as DIN 45635.
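
Because decibels are logarithmic, a reading taken close to the room's noise floor still contains some ambient energy. The sketch below shows the standard energy-subtraction estimate for the source on its own. It is only an illustration: the function name is hypothetical, the 33 dBA input is simply an example reading, and nothing here implies this correction was applied to the published figures.

```python
import math


def subtract_background(measured_dba: float, ambient_dba: float) -> float:
    """Estimate the source-only level from a combined reading.

    Both levels are converted to relative acoustic power, the ambient
    power is subtracted, and the result is converted back to decibels.
    """
    if measured_dba <= ambient_dba:
        raise ValueError("measured level must be above the ambient level")
    measured_power = 10 ** (measured_dba / 10)
    ambient_power = 10 ** (ambient_dba / 10)
    return 10 * math.log10(measured_power - ambient_power)


if __name__ == "__main__":
    # Example inputs: a 33 dBA reading in a room with a 28 dBA ambient floor.
    estimate = subtract_background(33.0, 28.0)
    print(f"Estimated source-only level: {estimate:.2f} dBA")
```

With a 5 dB gap between reading and ambient, the correction is a little under 2 dB, which is why a quiet room matters when testing quiet cards.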

KitGuru noise guide

10dBA – Normal Breathing/Rustling Leaves

20-25dBA – Whisper

30dBA – High Quality Computer fan

40dBA – A Bubbling Brook, or a Refrigerator

50dBA – Normal Conversation

60dBA – Laughter

70dBA – Vacuum Cleaner or Hairdryer

80dBA – City Traffic or a Garbage Disposal

90dBA – Motorcycle or Lawnmower

100dBA – MP3 player at maximum output

110dBA – Orchestra

120dBA – Front row rock concert/Jet Engine

130dBA – Threshold of Pain

140dBA – Military Jet takeoff/Gunshot (close range)

160dBA – Instant Perforation of eardrum

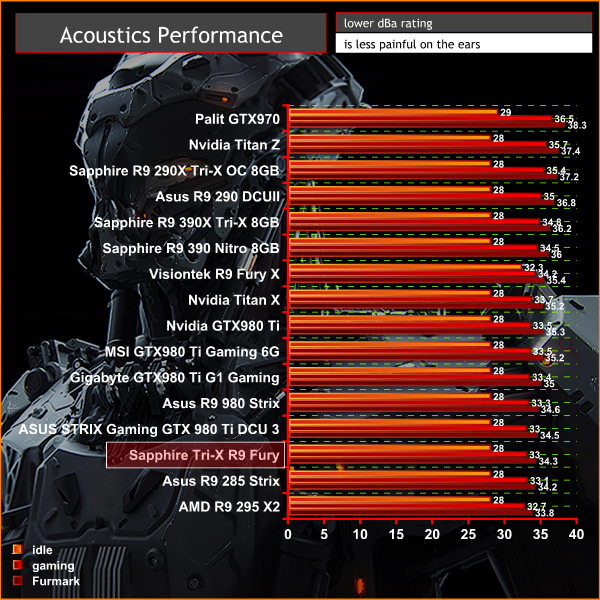

The Sapphire Tri-X R9 Fury is an exceptionally quiet graphics card. Under idle or low load conditions the fans switch off completely and the card is silent (our equipment is limited to an accurate minimum reading of 28dBA). Under load the card measures 33dBA, which is very quiet indeed. A single case fan would likely drown out the fans on the Sapphire card completely.
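
To put the case fan remark in numbers, independent noise sources add as acoustic power rather than as decibel values. The following is a minimal sketch of that sum. The 40 dBA case fan is a purely hypothetical figure chosen for illustration, not something we measured, and the function name is our own.

```python
import math
from typing import Iterable


def combine_levels(levels_dba: Iterable[float]) -> float:
    """Combine independent (incoherent) sound sources into one level.

    Each level is converted to relative power, the powers are summed,
    and the total is converted back to decibels.
    """
    total_power = sum(10 ** (level / 10) for level in levels_dba)
    if total_power <= 0:
        raise ValueError("at least one sound level is required")
    return 10 * math.log10(total_power)


if __name__ == "__main__":
    card_dba = 33.0      # load reading quoted in the text above
    case_fan_dba = 40.0  # ASSUMPTION: hypothetical case fan, not measured
    total = combine_levels([card_dba, case_fan_dba])
    print(f"Fan alone: {case_fan_dba:.1f} dBA, fan plus card: {total:.1f} dBA")
```

Under that assumption the card adds less than 1 dB on top of the fan, so the difference would be very hard to hear.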

Due to coil whine and pump noises associated with our Rev 1 sample of Fury X, the Sapphire Tri-X R9 Fury was noticeably quieter than the liquid cooled, higher cost model. We have not been able to get our hands on a Rev 2 sample of Fury X yet to compare, but we will in due time.

We tested the Sapphire Tri-X R9 Fury 4GB for coil whine by running some intense stress tests and games at over 500 frames per second. We noticed a little, but it is barely audible.

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards

You guys should do the test with the new drivers. But it's a great review. Thanks

“but based on research and what I have read on our own Facebook page, this lack of HDMI 2.0 connectivity is a real bugbear for gamers.”

Let’s be honest – what percentage of gamers are actually using 4k televisions that only have HDMI 2.0 inputs? Is it even 1%?

What percentage of the gamers making noise about Fury’s lack of HDMI 2.0 are actually using 4k televisions that only have HDMI 2.0 inputs? More to the point, what percentage of the people complaining about it aren’t ever going to own a 4k television that only has HDMI 2.0 inputs, and are just complaining because they don’t like AMD and want something to hang their complaints on?

The number of people using 4k HDMI 2.0-only televisions for their monitors who are also building their own HTPC and will be disappointed by Fury’s lack of HDMI 2.0 is so small that I’m pretty sure AMD doesn’t care – they have a much bigger market to focus on. By the time 4k HDMI 2.0-only monitors become mainstream enough that it’s necessary (assuming that ever happens), Fury will be LONG obsolete.

Besides, HDMI is on its way out anyway.

Some DP to HDMI 2.0 adapters are going to hit the market soon, so no problem at all.

And if you buy a 600$ card.. i think you can afford a 10$ adapter 😉

I absolutely agree. But the people complaining about Fury’s lack of HDMI 2.0 will NEVER hear that.

It’s about future proofing and also it’s kinda weird to leave it out

Very nice card, but the Sapphire wording is upside down!

Also, more and more people will be using a TV as 4K gaming is pointless on a 28″ screen, it’s suited to a big screen experience

“future proofing” isn’t an issue because by the time there is a sizable enough proportion of gamers using 4k HDMI 2.0-only televisions for their gaming that it becomes necessary, Fury will be obsolete. I don’t have the numbers handy but I’m willing to bet a nice shiny quarter that today, and by a year from now, the number of people gaming on 4k HDMI 2.0-only televisions is and will be still less than 1% of gamers.

I’d also be willing to bet that of the people making noise about the lack of HDMI 2.0 on Fury, 85% of them (or more) do not own a 4k HDMI 2.0 television and will not own one in the next year or two – they’re complaining because they want to complain.

There are 4k TVs with DP, so if we are talking about the future, you can purchase a TV with a DP 1.3 port.

But the big problem with playing on a TV is ghosting. Many people see it even on fast gaming LCDs, and on a TV panel it will be far worse.

Not my problem… got a 28″ 1440p… i don’t really need a 4k tv, i don’t even use the one in my house…

It's probably a fairly small percentage, although we have seen quite a few people complain about the lack of HDMI 2.0 support on our Facebook page. I own a large Sony 4k TV myself and I can't really use any AMD cards to game on it, otherwise it's 30Hz. Most of the 4k TVs in the UK seem to be primarily HDMI only, although I've seen some Panasonic models with a single DisplayPort, and I think some of those have yet to get Netflix 4k certification.

Sure, it's a small percentage, but I fail to see why AMD can't add an HDMI 2.0 port. Most devices you connect to a television have HDMI connectors (consoles, Sky and Virgin Media boxes, Blu-ray players etc.), so it seems unlikely it will ‘die overnight’ to be replaced by DisplayPort. Nvidia have HDMI 2.0 support, so it would be good for AMD to add it.

It’ll look the right way once its plugged into your motherboard facing outwards. 🙂

why didn’t they test it , benchmark it with Pcars.. oh wait…

We did use the 15.7 beta drivers for Fury and Fury X. We didn't have time to test all AMD hardware again, as the 15.7 beta was only given to us a few days ago.

Ah good point, I’m using a bitfenix prodigy, so wouldn’t work in such a case!

15.7 beta? Don’t they have a WHQL version of it already?

Why do you increase the power limit so much but not the core voltage?

Correct me if I'm wrong, but I thought the steps were:

1. Take the core as high as you can on default settings.

2. Increase the power limit and see if there is more from the core.

3. Make sure you have a good handle on temps and stability at this point.

4. Increase core voltage and clock. You may need another power increase, so it gets a little more advanced and takes an understanding of what will tell you whether you are limited by voltage, by power, or by the chip itself.

Unless you’re referring to a different driver, the 15.7’s aren’t beta, they’re WHQL. I think that’s where the confusion is coming from.

The suggestion I’m making is that a large proportion of the quite-a-few-people complaining about a lack of HDMI 2.0 support on your Facebook page are people who wouldn’t ever buy an AMD card even if it DID have HDMI 2.0 support. They just want another reason to complain about AMD.

Everybody deciding on a purchase should consider image quality as a factor.

There is hard evidence in the following link showing how inferior the Titan X image is compared to the Fury X.

The somewhat blurry image from the Titan X is lacking some details, like the smoke from the fire.

If the Titan X is unable to show the full details, one can guess what other Nvidia cards are lacking.

I hope reputable hardware sites investigate such issues fully, for the sake of fair comparison and to help consumers with their investments.

AMD is going to be selling DP to HDMI 2.0 adapters.

The driver we used was the one AMD sent us directly three days before this review was published, and as the GPU-Z screenshot shows, it says 15.7 beta. The WHQL driver looks identical and was released around the same time this review was published (maybe a little earlier, but certainly not early enough to test both Fury cards with it).

We tried everything; the screenshot just shows one variable. Once our card hit 1100MHz there was nothing left, regardless of settings.

Yes, they do now, but three days before the review was published that is the driver AMD sent us directly. It's the same driver anyway.

i’d like to see some Fury X and Fury crossfire tests from you guys. i know there are benchmarks out there but i want to see you guys do it.

Is there likely to be a re-review of the top graphics cards upon the DX12 release? It’d be interesting to see how the new hardware fares with the new software

Why are most of the people always complaining about that lack of HDMI 2.0 on the Fury (x)? I mean there are tons of DisplayPort to HDMI cables on the market nowadays… It is not like people won’t buy this card because of that.

Because they need a reason to complain about (and bash) an AMD product.

99% of the people complaining about HDMI 2.0 would never buy an AMD card anyway but they’ll spend hours of their day finding a reason to complain about it, and then complaining about it.

Win10 preview already has DX12 running on it and it is a free download. You can test it yourself.

more likely Nvidia fans

i just bought a Sapphire R9 Fury X 4GB HBM AIO Liquid Cooled GPU $389.99 @ newegg.com, Wed. Sept. 14th, for the sapphire AMD reference Design board & CM AIO H2O cooler, and i could give two shits of having HDMI 2.0, as i only have either a 24″ Benq LED Monitor with only DVI-D & VGA, or a 1080p Sanyo 42″ Inch HDTV with HDMI 1.1/1.2 or whatever, its a 5 yr old HDTV 1080p max, but using VSR…

(AMD’s Virtual Super Resolution) in the Display tab of radeon settings, i can set my games to 3200×1800 resolution, almost 4k, might as well be considered 4k, it might as well be…. and looks no different to me than my friends PC with Nvidia GTX 1070 6GB GPU with HDMI 2.0 @ 4K 3200×1800….

YUP, i call them nShitia GPUs, cuz nvidia cheats in everything… i used to be an nvidia fanatic, till they destroyed 3Dfx.. fuckin’ nshita basterds..

AMD needs to dominate the GPU & CPU MARKETS….

so COMPETITION can RISE / and PRICES DROP / Drastically, on both, Nvidia and Intel hardware… example…

AM4 Zen 8c/16 Thread cpu, theoretically at $299-$399? intel equivalent $600-$2000..

(ME)Um… no thanks intel, im with AMD On this one.. sorry, your too expensive, is there still 24k gold in your CPUS intel?…(INTEL) NO? (ME)DAMN…

i wish assholes would just stop dissing AMD, one day they will dominate, and AMD haters out there will cry?? over the price they paid for their cards…….

damn

https://www.techpowerup.com/gpuz/details/844m9

I must admit I went with a GTX970 with the intention of moving, once I had gathered a little more money, to a better card still supported by my CPU. But what I noticed almost immediately is that although its shaders are faster, it gives me poorer image and lighting quality than my old HD6950 2GB, which is remarkable considering my old card is a lot older than this new GTX970. For some reason the market makes AMD look weak in theory, while in practice AMD cards outshine the market. I got lured in by commercials and Nvidia lovers claiming Nvidia was a lot better… but now I get the feeling that those people work for Nvidia and get money for luring consumers into their trap.