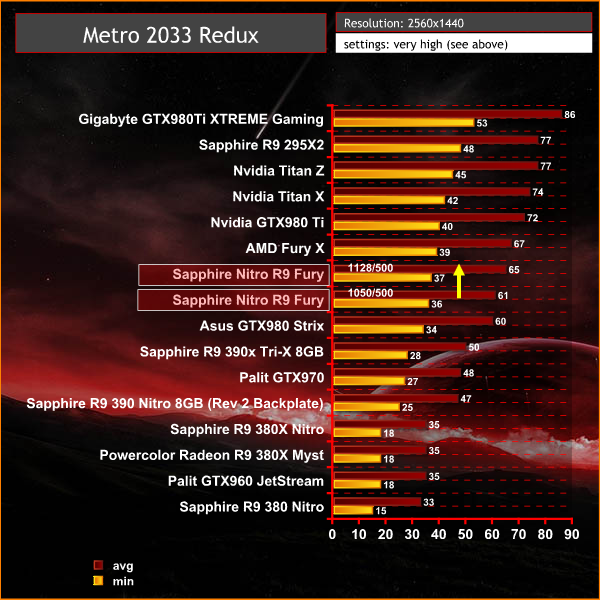

Metro 2033 Redux is a remake of the original game with enhanced graphics. We often test with Metro Last Light Redux, but decided to mix things up for this review. We test at 2560×1440 with the following settings: Quality: Very High, SSAA: Off, Texture Filtering: 16x, Motion Blur: Normal, Tessellation: Normal, Advanced PhysX: Off.

At 1440p the Fury averages around 60 frames per second with an increase of 4-5 fps when manually overclocked.
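As a rough worked example, assuming the ~60 fps stock average is the baseline, the 4-5 fps gain from manual overclocking works out to roughly 7-8%:

$$\frac{4\ \text{fps}}{60\ \text{fps}} \approx 6.7\%, \qquad \frac{5\ \text{fps}}{60\ \text{fps}} \approx 8.3\%$$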



KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards


The sheer power of the AIB 980 Tis still stuns me to this day! Nvidia did a stunning job on their final 28nm flagship imo…

With a price drop this card will be a damn good deal. Not as powerful as a 980ti, but cheaper.

I agree, but I like what AMD is doing too. I think both companies are releasing good products; although both still have plenty of problems, they are finally making major investments.

There are 8GB versions in the pipeline, so CrossFire those and you have a sub-£1k monster able to hit 4K resolution at 60fps.

Can you cite your source for that?

I’m not trying to be argumentative, I’d just like to read where you hear that there are 8GB Fury cards coming, as I’m under the impression that the Fiji memory controllers and the physical structure of HBM1 together impose a 4GB hard limit.

Heyyo, tbh it sounds more likely that he is talking about the Fury X2, which is 8GB total… But that’s split between the GPU cores, so it’s 4GB per core. The only time it will act as an 8GB solution is in games that take advantage of explicit multi-adapter… Judging by how hard it seems to implement, according to Oxide Games and their use of it in Ashes of the Singularity, odds are it will be a rare occurrence… So I wouldn’t bank on that 8GB of total VRAM.
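A back-of-the-envelope Python sketch of the 8GB vs 4GB point above. The function name `usable_vram_gb` and the two-mode model are illustrative assumptions, not any real API; actual memory use on a dual-GPU card depends on the game and driver.

```python
def usable_vram_gb(per_gpu_gb: float, gpu_count: int, explicit_multi_adapter: bool) -> float:
    """Hypothetical rough model of VRAM a game can treat as usable on a multi-GPU card.

    Assumption: with conventional CrossFire/SLI-style rendering, each GPU keeps
    its own copy of the assets, so memory is mirrored rather than pooled.
    Only a game that manages each adapter explicitly (DX12 explicit
    multi-adapter) can, at best, use the sum of the memory pools.
    """
    if explicit_multi_adapter:
        # Best case: each GPU holds different data, so capacities add up.
        return per_gpu_gb * gpu_count
    # Mirrored case: the same assets live on every GPU.
    return per_gpu_gb


# A Fury X2-style card: two GPUs with 4GB each.
print(usable_vram_gb(4, 2, explicit_multi_adapter=False))
print(usable_vram_gb(4, 2, explicit_multi_adapter=True))
```

The first call models the common case and returns 4; the second is an upper bound that only explicit multi-adapter titles could approach.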

Heyyo, WHOA! That Ashes of the Singularity result though! Super impressive that the Fury X ties the reference GTX 980 Ti… Now I just wish there were a Fable Legends benchmark too… Hmm, I wonder why Microsoft never released that benchmark to the public??? Sad face I am making…

Good enough that I bought one. EVGA does a super job with their factory-overclocked cards, which cost only $10 more for a 15% overclock. Cool, quiet, fast, reliable, with excellent drivers. What more could I ask for?

AMD gave a great effort with the Fury X/Fury line, but it really didn’t help their business all that much. AMD took a major loss when the GTX 980 Ti was released at a price $150 lower than what AMD was going to charge for the Fury X. AMD had to match that price, and the AIB partners were furious and did not make many cards available. Between that, the hassle of a water cooler, and the coil and pump whine, all for roughly the same benchmark results Nvidia was offering, folks shunned the Fury and embraced the 980 Ti. AMD had a real chance here too.

The GTXs suck at async compute. If you test AoS with AA (async compute) at 4K, you will understand why Nvidia asked reviewers not to use AA in benchmarks. Check out the Titan X and R9 390 scores at 4K with Crazy settings and 4x MSAA. The settings are the same, except that the Titan X run uses a newer version of AoS and the R9 run uses an older one.

https://www.youtube.com/watch?v=JS0rhMYmehE

https://www.youtube.com/watch?v=PqPj4N9H77A

Hi there,

I have the Nitro card, but somehow I cannot access the OC+ BIOS. Help!

HBM2 Fury

Heyyo, that information isn’t correct, dude. It’s not that NVIDIA “sucks” at async compute… it’s apparently not implemented yet, despite what their drivers suggested. http://www.overclock.net/t/1569897/various-ashes-of-the-singularity-dx12-benchmarks/2130#post_24379702

The situation hasn’t changed yet either. NVIDIA still doesn’t have an async compute driver out, and so far it’s unclear whether it’ll mean native async compute performance or something similar to the dynamic recompiling we saw with DirectX 9, when shader code was dropped from 24-bit to 16-bit precision (NVIDIA’s FX 5000 series at the time didn’t support 24-bit natively, only 16-bit and a much slower 32-bit mode, so it lost rendering time dynamically recompiling and ended up with lower framerates). This was evident when Half-Life 2 released. You can find an article about it if you Google "Maximum PC shader code NVIDIA". The following generation of cards? Yes, the NVIDIA GeForce 6 series ran full-precision shader code natively.
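To make the precision point concrete, here is a small Python sketch comparing how much error a value picks up when stored at 16-bit versus 32-bit float precision. It only illustrates why lowering shader precision can change results; it does not model what NVIDIA's drivers actually did, and 24-bit is left out because Python's `struct` module has no 24-bit float format. The helper name `round_trip` is hypothetical.

```python
import struct


def round_trip(value: float, fmt: str) -> float:
    """Hypothetical helper: store `value` in a narrower float format and read it back.

    'e' is IEEE 754 half precision (16-bit), 'f' is single precision (32-bit).
    """
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


x = 0.1
fp16 = round_trip(x, "e")  # 16-bit: only 10 explicit mantissa bits
fp32 = round_trip(x, "f")  # 32-bit: 23 explicit mantissa bits

print("16-bit:", fp16, "error:", abs(fp16 - x))
print("32-bit:", fp32, "error:", abs(fp32 - x))
```

The 16-bit copy of 0.1 is off around the fifth decimal place, while the 32-bit copy is off only around the ninth; errors like that can add up across the many operations in a shader.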

With that said, I wouldn’t buy a Maxwell GPU: since nothing has come forward yet about async compute working on Maxwell, it’s not worth the risk. NVIDIA Pascal might have native async compute support… but same thing, I wouldn’t buy one until there was definitive proof from multiple review sources. Same idea as at the beginning of the DX9 era… I wouldn’t risk it unless I knew for certain it was working.

http://www.3dmark.com/fs/7490341 i7-6700K @ 4.6GHz, Kraken X61, 16GB DDR4 @ 4400MHz, XFX Fury X @ 1080MHz, Sabertooth Z170 Mark 1

http://www.3dmark.com/sd/3810052

AMD’s expensive Fury is not a good deal compared with the many factory-overclocked versions of the performance-per-watt champion, the Maxwell GTX 980 Ti. HBM1’s bandwidth is wasted on 28nm GPUs, and with only 4GB, the card is gimped for 4K gaming.

VERDICT: NO thank you, AMD. I recommend a factory-overclocked GTX 980 Ti, which is by far the biggest bang for your GPU buck.

It is mentioned in the reviews, but no one says which switch position is which:

1. the default BIOS, with a 260W TDP and a 75°C target temperature;

2. the second BIOS, with a 300W TDP and an 80°C target temperature.

Namely, which one is light on (pressed) and which is light off (unpressed)?

In my testing, in both positions the temperature under stress stopped at exactly 79°C, which left me puzzled and in the dark…

Those aren’t launching until sometime in 2017. The landscape will most likely look completely different by then.

That score is a bit low; it easily deserves a 9 imho. Fantastic cooler and performance, and at the right price it would’ve been a 9.5-10.