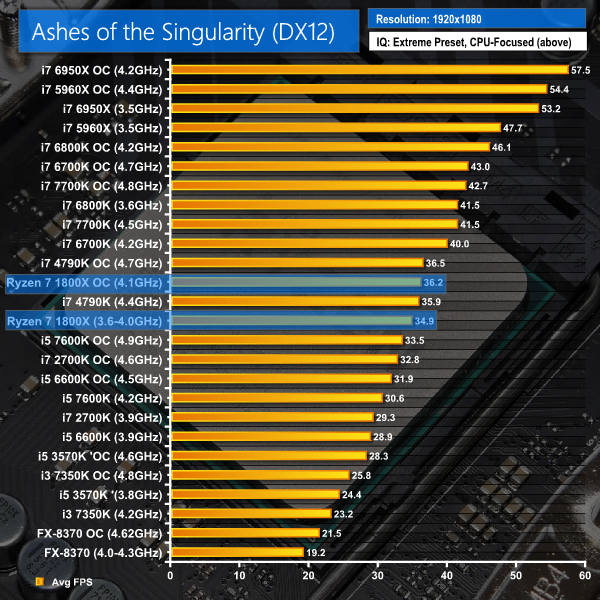

Ashes of the Singularity

Ashes of the Singularity is a Sci-Fi real-time strategy game built for the PC platform. The game includes a built-in benchmark tool and was one of the first available DirectX 12 benchmarks. We run the CPU-focused benchmark using DirectX 12, a 1080p resolution and the Extreme quality preset.

This result is surprising. Ashes of the Singularity is a very well multi-threaded benchmark, as indicated by the Haswell-E and Broadwell-E performance figures for Intel. All of Ryzen 7's threads were pinned to around 80% load when overclocked, which is a similar value to the 5960X at 4.4GHz.

Ashes is simply indicating that Ryzen 7 gaming performance, even when the engine is heavily multi-threaded, is not as strong as Intel on a core-for-core basis.

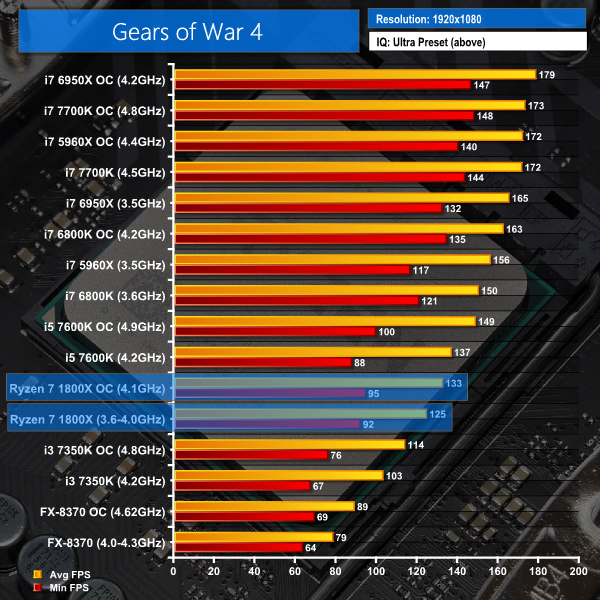

Gears of War 4

Gears of War 4 is a third-person shooter available on Xbox One and in the form of a well-optimised DX12-only PC port. We run the built-in benchmark using DirectX 12 (the only API supported), a 1080p resolution, the Ultra quality preset, and Async Compute enabled.

Note: The Core i7-2700K, i5-3570K, and i7-4790K are not shown in Gears of War 4 as the game download was too large to install on their system SSD and the clunky Windows Store platform gives errors when moving games installed on a secondary SSD between test systems.

Gears of War 4 and its DX12 operating mode place Ryzen 7 1800X performance within around 10-15% of Kaby Lake Core i5-7600K. The 1800X can push more than 120FPS average at both stock and overclocked speeds, while also keeping minimums above 90FPS.

In isolation, this is good gaming performance from the AMD chip, but when compared to Intel's modern Core i7 processors, there is a noticeable performance gap. On average, the £400 i7-6800K is 20% faster at stock and 23% faster when overclocked. Move the sights to the 5960X and Intel's $1000 chip is 25% faster than Ryzen 7 1800X at stock and 29% quicker when both are overclocked.

If Gears of War 4 is the type of game that you want to run at a high refresh rate, the Ryzen 7 1800X will do just that. However, the Broadwell-E and Skylake-based chips will do it better, keeping minimums and averages well above 1800X levels.

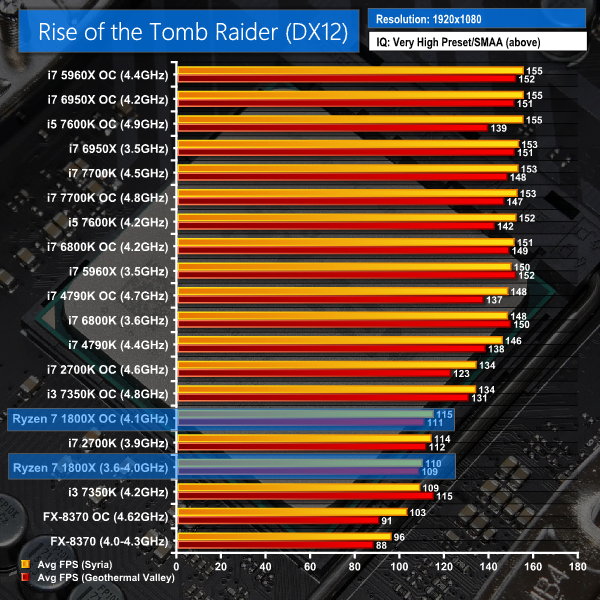

Rise of The Tomb Raider

Rise of The Tomb Raider is a popular title which features both DX11 and DX12 modes. Heavy loading can be placed on the CPU, especially in the Syria and Geothermal Valley sections of the built-in benchmark.

We run the built-in benchmark using the DirectX 12 mode, a 1080p resolution, the Very High quality preset, and SMAA enabled.

ROTTR in its DX12 mode is proficient at balancing load across Ryzen 7's 16 threads though it does like to pin a thread or two at very high loads (90%+). This loading was significantly higher than what was observed for the 8C16T 5960X and seemed to materialise as a limiting factor for game performance.

Ryzen 7 1800X is around Sandy Bridge i7 2700K performance in ROTTR DX12. The modern i5s and i7s based on Haswell architecture and newer are around 30% (or more) faster than Ryzen 7 on average. This is another disappointment, especially given that ROTTR is a DX12 title that should be able to leverage the high core count of Ryzen 7.

To put this in perspective, though, if you have a 60Hz or 75Hz monitor, Ryzen 7 will do the trick. If you game at 100Hz, Ryzen 7 1800X still looks to be a compelling option. But for 120Hz+ gamers, a modern i7 is a better choice for ROTTR.

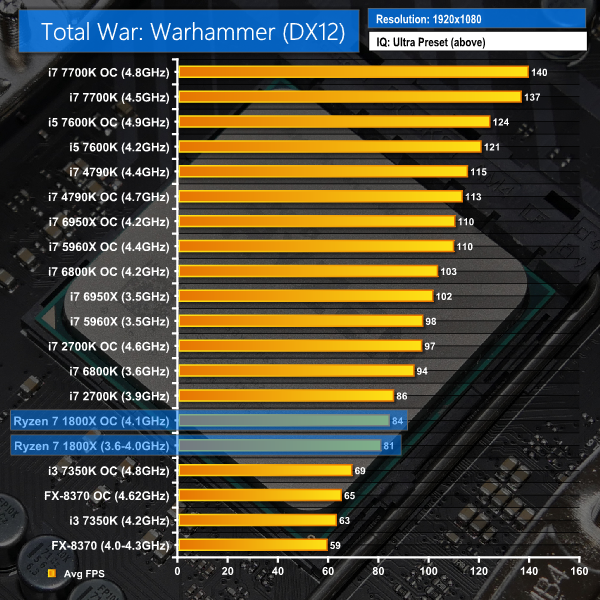

Total War: Warhammer

Total War: Warhammer is another title which features both DX11 and DX12 modes. Heavy loading can be placed on the CPU using the built-in benchmark. The DX12 mode is poorly optimised and tries to force data through a low number of CPU threads rather than balance operations across multiple cores. As such, this gives a good look at pure gaming performance of each CPU in titles that aren't well multi-threaded.

We run the built-in benchmark using the DirectX 12 mode, a 1080p resolution, and the Ultra quality preset.

Total War: Warhammer uses a poorly optimised DX12 mode that tries to force data through one or two threads, resulting in at least one thread being locked at 100% utilisation and dictating overall gaming performance. So, by being badly optimised, Total War proves its worth in our results by giving an indication of generalised gaming performance on games that do not effectively scale their load across multiple CPU threads.

Average performance for the Ryzen 7 1800X is slightly below that of a stock-clocked Sandy Bridge 2700K. Only the old FX-8370 performs worse in this game. If you have a desire to drive Total War: Warhammer at a high frame rate, you will be better served by a fast, Skylake-based chip.

DX12 Gaming Performance Overview:

Ryzen is a solid gaming CPU in our array of DX12 titles and it's certainly a vast upgrade over the FX-8370. However, Intel's modern i7 CPUs are faster than the 1800X and the high-frequency Kaby Lake i5 also beats the Ryzen 7 chip in 3 out of 4 tests.

You can play ROTTR and Gears of War 4 at or around 100 FPS with Ryzen 7 1800X and a Titan X Pascal at Very High or Ultra image quality settings. The Core i7 chips offer more performance headroom, though, so if you are a 120/144Hz+ gamer, they will be better choices than Ryzen 7. Provided you don't plan on conducting intensive background tasks, such as OBS streaming, that is.

KitGuru KitGuru.net – Tech News | Hardware News | Hardware Reviews | IOS | Mobile | Gaming | Graphics Cards

Intel is dead.

AMD is back. Gonna grab 1700 with R9 Nano.

Interesting, although I admit to being disappointed with performance in the gaming arena. No faster than my overclocked 4790K. I’ll wait this one out for now or until Intel brings Kaby Lake prices down. The 7700K or 6800K may be a better buy with a price reduction…

It’s a very mediocre gaming chip, I’d be better off just moving up to a 4790K from my current 4690K on my Z97 rig rather than going to all the hassle & expense of installing new CPU, Mobo & RAM.

Damn…

The Gaming performance is disappointing. :'(

Something just doesn’t seem right here. How can a chip score so well in benchmarks (both single and multi-core) and then do rather poorly in gaming performance? Is this an issue to do with the quality of how the games are programmed?

Looks to me like an optimisation issue. Ryzen wasn’t out when those games were launched, and the platform is very young, so it looks to be something that will solve itself with updates to BIOS, drivers and Windows. Still, don’t expect to see the 1800X beating the 7700K; it will just more closely compete with the X99 6900K, 6950X etc. Lower-end Ryzen SKUs may have better single-core performance with higher frequency (that’s a guess), translating into better gaming performance.

I mean DX12 should scale better with higher core counts shouldn’t it? but Ryzen looks to be doing even worse at DX12, that’s the main reason that I think there’s a lack of optimisation, not horsepower.

You are indeed, a kok.

From the wide array of tests I’ve seen in the last hour I wouldn’t recommend the 1800X for 1080p gamers unless they’re streaming or performing other CPU-intensive tasks. The 1800X is clearly perfectly competitive at 4k as you’re more GPU-bound, but at 1080p I think the 7700K is going to be better value for money, which is not something I thought I’d ever say about Intel. Intel have been on the top for too long. If game developers had had Ryzen for the last two-three years then AMD might be more competitive, but sadly they do fall behind in these gaming tests. Maybe the lower core models will be better options for gamers as theoretically the clock frequencies could be higher.

All those games will have been compiled using the Intel tool set, which will naturally favour Intel CPU’s.

Because AMD simply have not been competitive for the last few years in the CPU market, all the game development studios will be using Intel’s compilers, so it will hurt AMD gaming performance.

At least that’s what I suspect is going on.

That does sound about right because regardless of what benchmarks are used, across the board it hammers most CPUs, so to say that then translates into lower performance for games like Witcher 3 and GTA V does seem to be more of an optimisation issue than crap architecture.

Agreed.. 4790K + GTX 1070, I’m good for now.

Agreed. 100%..

But i’m sure AMD will beat Intel XD now is that AMD 1 step a head… 😀

Can you do some gaming benchmarks while doing other background tasks like streaming. I wanna see how well it does for my next streaming build.

LOL of course this is right, why do you think AMD didn’t let anyone release reviews until the chip was actually shipping?

Fucking called it. AMD is on par with i7 cores of roughly same price range. Lacking in gaming power, but with great encoding points.

Thanks, but no thanks. My $210 (USD) i5 6500 is well within tolerances of price per performance.

AMD didn’t fail to let my mediocre expectations down. Maybe the next iteration of Ryzen 7 chips might be interesting to look at. For now, I’d still rather go with Intel if this is all that AMD’s top of the line can offer.

6/10 would definitely buy a 7700k before the 1800X.

very early on for it to be fully optimized by their development team, but even as is not at all optimized compared to now old to very old highly optimized Intel flagship chips, I still think Ryzen is outstanding, need more reviews from many sites to make a valid opinion as everyone does testing differently for many reasons claiming it can only push around 60FPS in some of the listed games while others are reporting same games pushing north of 90-120. TitanX though powerful might be inducing some AMD hateon 😛 anyways we shall see how the landscape changes in a few weeks/months once things have been polished and AMD various partners have had a chance to tune and optimize as well as the other variants come around.

For AMD to even be within spitting distance with Ryzen of flagship or even midrange Intel dominated space speaks volumes of Ryzen being very well engineered, compared to previous offerings for many years now that were at best another choice rather than truly competing 🙂

This is something I want to look into though I’ll openly admit that I am not a game streamer and am therefore not proficient with operating (never mind testing with) OBS and the likes.

If you have suggestions for the sort of gaming+streaming tests you’d like to see, please get in touch using the contact form (http://www.kitguru.net/contact/) and we can discuss some test procedures via email :).

Luke

DX12 benefits AMD graphics but hinders AMD CPUs? For the purpose of “shifting the load to the GPU” and “distributing load into many threads” it seems that DX12 failed here, miserably. Absurd stuff that seems to have gone unnoticed. I’m glad you didn’t neglect this issue like other reviewers (sadly some of them are big-name).

You don’t need 4K. All it takes is 1440P and the gaming performance gap closes to non-existent in most games. Look at the Guru3D review. All these reviews only showing 1080P with Titan Xs are doing Ryzen a HUGE disservice. No one plays games like that unless they are playing competitive CSGO or something. For the VAST VAST majority Ryzen will offer the same gaming performance as Intel for practical purposes.

Only 4.1GHz at 1.44v damn, thats gonna suck for games that require that singlecore speed. My 7700K at 4GHz takes 1.03v by stock and runs great at -0.1v offset, resulting in 0.93v at 4GHz, and much much cooler.

These chips are great powerhouses but will suffer from that dreaded issue of single threaded performance for games that dont support multi threading.

Half tempted to switch… almost… maybe a few years down the line when my 5.3Ghz 7700K starts to struggle… if it ever struggles.

Learn to read – I wasn’t saying the results are a lie, but how it’s very bizarre that benchmarks put the 1800X at the top, whereas for games it’s at the bottom. It only makes sense if the games have been created focusing solely on Intel architecture.

Most games are ports from consoles, PS4 and Xbone run AMD hardware, so the guy’s assertion above that they are optimized for Intel is completely false.

So you recon the PC ports aren’t optimised for Intel? They’re just “ported”? Stick to KFC m8, outta ur depth here.

man, if it was a simple matter of bringing the game code to the PC don’t you think there would be simulators for the PS3, 4 and Xbox 360, one? That’s not this simple, the game IS INDEED optimised to run in PC otherwise the port would just be BAD remember that last Batman game? That game wasn’t optimised for PC that’s why it launched so badly on PC.

Also, again we have lots of things not yet optimised for Ryzen. If you read other reviews, like Gamers Nexus’s, you’ll see that turning SMT off gives a rather considerable performance increase (same thing happened when Intel introduced Hyper-Threading), which means a future Windows/BIOS update will remove this bottleneck and free that performance for us even with SMT ON, plus other tweaks that certainly will come our way. Guru3D benchmarks are also interesting since they’ve tested 1440p as well.

More AMD hype, another failure. Games are 20 to 30% slower; this is not good.

AMD explains why Ryzen doesn’t seem to keep up in 1080p gaming

http://www.kitguru.net/components/cpu/matthew-wilson/amd-explains-why-ryzen-doesnt-seem-to-keep-up-in-1080p-gaming/

If AMD chips are exactly the same as Intel’s then I don’t see any fault in your statement. But the fact of the matter is, they are different. They may have similarities thanks to the cross licensing agreement but they’re both different architectures and will run differently from the other.

they do have a point though. 1080p is like the minimum resolution people play at now.

nonetheless, it’ll get fixed soon enough.

LOL I’m the one out of my depth? You’re the one that didn’t see this coming and you’re surprised grasping at straws as to why this CPU sucks. Just look at AMD’s history. Bulldozer optimized for integer and binary calcs and used clustered multi threading and got crushed on floating point, Excavator tried to fix that problem by using SMT instead of clustered multi threading but still failed miserably. Now we have a new CPU that guess what? Sucks at multi threading. Shocker that it works better with SMT disabled. LOL. You salty AMD fanboys are just hilarious. Hope you enjoy cinebench!

or maybe like, do encoding while gaming? just so we could see how it would perform under that kind of load.

in the Ryzen official launch, they had a demo called “Megatasking” or something like that where they would run multiple benchmarks at a time. though they’re all productivity benchmarks, we’d like to see some with gaming.

It seems to me there is some issue at 1080p; maybe it can be corrected with a software update.

in 2560×1440 resolution everything is alright.

Lol magic port button. Doesn’t exist. Go back to Wendy’s bruh.

Yes but they don’t do it with a 1080 or Titan like the reviewers are using. They do it with 1060s, 1050s, 460s, 470s, and 480s, which again will make a GPU bottleneck. In any realistic use case for 99% of people it will be the GPU deciding the FPS, not the CPU.

Someone is butt hurt because they don’t know wtf I just said

Says the burger flipper who thinks there’s a magic port button. Salty logic bruh.

LOL, you’re just salty AMD couldn’t come out with a decent CPU and your mom can only afford a Ryzen 1700 which is garbage. Good luck with your “optimizations” just like bulldozer right? HAHAHAHAHAHAHA

AMD said they’re working on Windows drivers that they hope to be released next month. It is said to improve gaming performance specifically.

The Nano is so sexy.

Facepalm.

I’ll probably wait a month or two before making my own assessment. By then, the hype surrounding Ryzen would have subsided. What I’m more interested to see is a compatibility test for Ryzen on Windows 7 seeing AMD isn’t keen on supporting it. Hoping for slim chance that Kitguru will do one soon.

The link gave me error 404 🙁 I would suggest doing a live stream using twitch of a game like For Honor or Battlefield 1 at 1080p/1440p. Do the same for Intel’s i5 and i7 kabylakes and then of course throw in a broadwell-E. We may also need to see dual screen setup of playing an HD youtube vid with 2-3 tabs for like tech sites on one screen while doing gaming/streaming on the second. Also try to clock all cpu’s to something like 4Ghz so we can see clock for clock performance along with stock clock comparisons. Thanks!

This is one of the better reviews I’ve seen… But all DO point to an optimization issue with AMDs SMT implementation… Some games show higher perf without SMT turned on…

But I will give up a few fps for the scientific and compiling level of work… I guess we’d need to see execution traces to tell where it’s bottlenecking… But it SEEMS like they are trying to load the second thread and it takes too long to return…

I watched a video of gaming which showed thread number two barely getting any work…

As close as it is and the way it performs at 4K, once they get the threading model right in game engines it may get another 10-20% of perf… This review makes it clear that there is plenty of CPU % left on the table…

solution: Offer the R7 1850x, clock it at 4ghz, and sell it at $375. Boom

Sorry, my 6800K, 4.1 GHz @1.199 V, 100% stable, how much voltage the destroyer 1800X has @ 4.1 GHz? Heat and power consumption do count, mind you.

lol pathetic! 4.1 max overclock? while you can get a Skylake that is much cheaper now and go all the way to 4.5GHz easy

http://www.kitguru.net/contact/

When I noticed that EVERY review site only listed 1080p I thought to myself something was WRONG here. There is certainly something going on here most likely and I am glad other people are seeing it too.

I think Intel is playing dirty games with enthusiast data and offering incentives for unfavorable / mis-leading reviews. It is just a little too convenient that all the sites list only 1080p…

We know a ton of people using high refresh monitors and we have reviewed plenty of 120Hz and 144Hz panels, so those ultra high frame rates are important to get the most from those screens. 1080p is still the most popular resolution in our polls. We even have a new 240Hz panel from Asus in our labs to test!

We also showed 4K resolution, which is a good indication of GPU limiting. It’s not like we downscored the processor for the 1080p gaming results – it won our highest award as we think this will improve over time. There is simply not enough time before these reviews to run multiple games at every resolution on many GPUs. Simply leaving out 1080p testing with a powerful GPU would be sloppy work for any professional review site; we are here to find problems with any product and report on them, not hide them behind limiting situations. AMD have even answered the issue publicly: http://www.kitguru.net/components/cpu/matthew-wilson/amd-explains-why-ryzen-doesnt-seem-to-keep-up-in-1080p-gaming/

1080p is still by far the most popular resolution for PC gamers: (http://store.steampowered.com/hwsurvey/?platform=pc). Granted it is unlikely that people using such high-end hardware will be gaming at 1080p but they can be using any number of resolutions – 1080 ultrawide, 1440p, 2560×1600, 1440 ultrawide, 4K UHD, 5K, triple screen. We simply cannot test all of those eventualities so sticking to the ‘industry standard’ 1080p resolution makes sense, while also adding in 4K to show performance in a GPU-limited gaming scenario.

The reason a Titan XP is used is because testing hardware is all about eliminating bottlenecks. We could have used an RX 480, or any other GPU from our stack, but that doesn’t do any good because the GPU will be limited before CPU limitations come into play. The Titan XP and fast RAM put the performance onus solely on the CPU so that we can observe its performance and analyse it. That’s what our testing allowed us to do and, as written in the review, if you don’t want to game at high refresh rates above 60FPS, Ryzen is a perfectly capable CPU. If you game at a resolution, or with a GPU, where you can’t even get anywhere close to more than 100FPS, Ryzen is a perfectly capable CPU. If you game at 120FPS+ high refresh rates, Ryzen can start to show some issues against Intel’s competitors. 1080P allows us to show that as GPU power is in such excess at the resolution and it’s down to the CPU to keep pace. This logic is the same across other resolutions if it is only enhanced GPU power required to operate at those higher resolutions (not like some open-world games where a higher resolution means more on-screen action and possibly more NPC or background physics calculations).

As we showed with 4K, Ryzen will keep pace in GPU-limited scenarios. That point scales – if your gaming resolution is highly GPU-limited and less than 100FPS, Ryzen looks good. If your GPU isn’t as fast as a Titan XP and you cannot get anywhere near 100FPS+, Ryzen looks good. 1440P strikes a middle ground between 1080P and 4K, but it won’t be entirely GPU-limited if you are pushing past 100FPS in some games. I use a 3440×1440 100Hz monitor myself and therefore made interpretations as to Ryzen’s performance for my own gaming PC. The 1080P result for GTA V (my favourite game) showed Ryzen hitting less than 100FPS average. At 1440P there will be higher GPU load which will reduce the performance difference between CPUs but what if you simply want to throw more GPU power at your gaming PC (not unreasonable when spending £1k on a high-end gaming monitor)? You are still going to be limited by the pace set by the Ryzen CPU in that case. If I want to play GTA V at 100Hz, the 1080P test data in this review showed that Ryzen is going to struggle more than some modern Intel competitors, even if I add more GPU power.
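The bottleneck reasoning in this reply can be sketched with a deliberately simplified model, in which the delivered frame rate is capped by whichever of the CPU or GPU is slower. The function name `delivered_fps` and every frame-rate figure below are hypothetical placeholders chosen for illustration, not measurements from the review.

```python
def delivered_fps(cpu_limit_fps: float, gpu_limit_fps: float) -> float:
    """Simplified assumption: the slower component sets the frame rate."""
    return min(cpu_limit_fps, gpu_limit_fps)


# Hypothetical CPU ceilings (frames the CPU can prepare per second).
cpu_a = 95.0   # placeholder for a CPU that tops out just below 100 FPS
cpu_b = 125.0  # placeholder for a faster CPU

# Hypothetical GPU ceilings for different resolutions and GPU budgets.
scenarios = {
    "4K, single GPU": 60.0,
    "1440p, single GPU": 100.0,
    "1440p, more GPU power": 150.0,
}

# For each scenario, store (FPS with CPU A, FPS with CPU B).
results = {
    name: (delivered_fps(cpu_a, gpu), delivered_fps(cpu_b, gpu))
    for name, gpu in scenarios.items()
}

for name, (fps_a, fps_b) in results.items():
    print(f"{name}: CPU A {fps_a:.0f} FPS, CPU B {fps_b:.0f} FPS")
```

Under these made-up numbers the two CPUs are indistinguishable when the GPU is the limit, and the gap only opens up once GPU power is in excess, which is the pattern the 1080p testing is meant to expose.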

I want to do some more gaming-focussed testing over the coming weeks/months with Ryzen so that I can test more of these scenarios that gamers are curious about (1440P, more 4K, less GPU power, more GPU power through XFire/SLI, etc.). As it stands, this review contains more than four months worth of gathered, validated, and re-validated test data so adding 1440P resolution (as well as 4K which I specifically added for this review) would have simply been impossible in the given timescale.

TL;DR – thanks for the feedback. 1080p and a fast GPU are used to eliminate system and GPU-induced bottlenecks and put the performance onus on the CPU, not anything else. As we wrote in the review, Ryzen is a good gaming CPU if you stick to 60FPS. If, however, you want ultra-high refresh rates (whether at 1080p or 1440p with more GPU power), Ryzen does show performance differences against some modern Intel chips. This may, however, change with AMD’s suggestion of patches and optimisations: http://www.kitguru.net/components/cpu/matthew-wilson/amd-explains-why-ryzen-doesnt-seem-to-keep-up-in-1080p-gaming/

Look at the Steam survey data. That’s why 1080P is used. 4K data was added specifically for this review (which was very tough to do in the given time frame) to show the point of Ryzen keeping pace when you have GPU-limited performance. I have been working on nothing but gathering test and comparison data for this Ryzen review for more than four weeks. Adding 1440P performance with all of the comparison data would have not been possible in the allocated time. It can, however, be interpreted from the 1080P and 4K performance data.

If your 1440P monitor is 60Hz, Ryzen will be fine and your gaming performance will primarily be driven by the GPU. If your 1440P is ~100Hz and you have sufficient GPU horsepower to drive that refresh rate, Ryzen will largely be fine though some games like GTA V may still show minor performance differences to modern i7 CPUs. If your 1440P monitor is 120Hz and you have sufficient GPU horsepower to drive that refresh rate, Ryzen will be largely fine in the games we tested but some may show performance disadvantages against some Intel competitors (GTA V, ROTTR, Total War: Warhammer). If your 1440P monitor is 144Hz+ and you have sufficient GPU horsepower to drive that refresh rate, Ryzen will be fine in some games and will struggle against Intel in some others (add Gears of War 4 to that previous list). This can be interpreted from the 1080P performance where GPU horsepower is in excess and the focus is being put on the CPU performance. At 1440P, of course frame rates will be reduced due to higher GPU load, but what if you simply add another graphics card to once again put GPU power in excess? Then a similar pattern as the 1080P performance results will be shown, showing Ryzen to generally be perfectly good up until around 120Hz.
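The refresh-rate guidance in this reply can be written down as a small lookup, shown here as a rough sketch. The function name `games_at_risk` is hypothetical, and the exact bucket boundaries (below 100Hz, 100 to 119Hz, 120 to 143Hz, 144Hz and up) are an assumption made to turn the reply's examples into ranges. The game lists come from the reply itself, and the sketch assumes enough GPU power to reach the refresh rate in question.

```python
from typing import List


def games_at_risk(refresh_hz: int) -> List[str]:
    """Games where Ryzen may trail modern Intel i7s at this refresh rate.

    Assumes GPU power is sufficient to drive the refresh rate; bucket
    boundaries are an illustrative assumption, not from the review.
    """
    if refresh_hz < 100:
        # 60Hz class: performance is primarily driven by the GPU.
        return []
    if refresh_hz < 120:
        # ~100Hz: largely fine, minor differences in GTA V.
        return ["GTA V"]
    # 120Hz: some games may show a disadvantage.
    at_risk = ["GTA V", "ROTTR", "Total War: Warhammer"]
    if refresh_hz >= 144:
        # 144Hz+: Gears of War 4 joins the list.
        at_risk.append("Gears of War 4")
    return at_risk


for hz in (60, 100, 120, 144):
    print(hz, games_at_risk(hz))
```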

Nothing to do with Intel (seriously, how did Intel enter the conversation? We haven’t spoken to Intel or their PR agency since late 2016 and that was only to decline our i7-7700K sample from them. We haven’t worked with them since the i7-6950X launch in May 2016). There’s no need to try to look for tin-foil hat stories. I don’t care whether AMD is faster or whether Intel is faster. I simply want to find out who is faster in which scenarios and present those facts.

Great suggestions, thanks. I will try to look into these kinds of tests and usage scenarios and address them with some performance numbers for Ryzen vs Kaby Lake vs Intel HEDT in the future. There will be A LOT of data to gather with your suggestions but they are very good, so thanks for that and I’ll try my best to work with them.

Obviously with standard benchmark testing we try to minimise background tasks etc. in order to get accurate results for the test in question. However, we also understand that this isn’t how most people play games in reality hence why I tried to add comments relating to spare CPU cycles and how they will be useful, when analysing the gaming performance data in this review.

Thanks for the suggestion. The AMD Megatasking demo was Blender rendering, plus Handbrake video conversion, plus Octane web browsing at the same time. That was a good test scenario for showing multi-core advantages. Perhaps not realistic that people will be doing all that while gaming but I guess it’s also not unreasonable that you may fire up a game while waiting for Handbrake to convert the Bluray you are ripping onto your home media server or while your cool design is being rendered at high resolution.

As with the above suggestions, I’ll look into these usage scenarios over the coming weeks and try to get some test data for them. Thanks.

Voltages used for AMD chips aren’t really comparable with those used for Intel CPUs. For example, the 6950X at 1.275V (or 5960X at 1.30V) draws more power than Ryzen 7 1800X at 1.44V loaded.

Heat (thermal energy) is tied more closely to power consumption than temperatures (which are a factor of material properties used in the design of the heat exchange system). High power consumption will mean more heat (thermal energy) being pumped into your room.

Well, according to Tom Logan at OC3D, the 1800X OC consumes 108 W more than the 6850K OC. By my book, it’s way too much.

Seen the news or too busy being salty as f? CUZ UR WRONG AH M8 WELL WRONG! #Saltynwrong

According to Tom Logan? You are not seriously using that guy as a yardstick for anything with any kind of credibility are you? Last time I read his site he was on his soap box about some brand being terrible – probably related to the fact they don’t advertise with him. If it was called the ASUS Ryzen 1800X it would have gotten top marks.

I skimmed his Ryzen review and to even compare it to some of the better ones, including Anandtech and KitGuru, is a joke. His power figures look completely off based on people I trust, but it’s not a shock – he is too busy building his personality on YouTube and Facebook to actually test things properly.

I think it’s a great looking CPU. Gaming performance is a bit off, but it’s still playable for me based on what I’ve read – I wanted a new X99 system, but I hated paying £1000 for the CPU, which has held me back so long. Just ordered the 1800X for £480 and I have to say I’m really excited. Don’t know about you, but playing games is just a part of my day.

Jesus, another idiot with conspiracy theories who doesn’t even read the review properly. Once I saw ‘ONLY 1080p’ I had to hit my head on the wall. Comments from people on being paid by Intel when there is actually 4K testing in this review makes me smile. Doh.

Josh – if I saw KitGuru testing this CPU at 1080p with a 460 graphics card I wouldn’t be here – because I want a review of the CPU, not a video card – it is after all a Ryzen review, not a graphics card review, right?

You want a CPU review but its actually not really a CPU review but its more ‘a CPU review being held back by the GPU’? yeah, thats idiotic. beyond idiotic. Always best to get a grip of what you are talking about in public before you attack others with retarded comments showing that you really are struggling to even figure out how this all works.

‘KitGuru you suck, you actually tested the CPU properly without GPU limiting and other factors influencing the actual results for the CPU. Me and my buddies think Intel are paying you. How much did Intel pay you to give The Ryzen 1800X your highest gold award? £50,000?’

I dont think it was ever designed as a £500 ‘gaming processor’ Just like the 5960X and 6950X were never £1,000 and £1,500 processors designed to just play games.

The 1800X is clearly targeting the X99 platform, and with a processor half the price of the 5960X and outperforming it, seems they did a good job. Hell it even keeps up with the 6950x which is more than THREE times the price.

Can’t believe so many people are missing the point!

Did you read the results? A Core i7 Skylake processor has half the cores and a fraction of the performance OUTSIDE gaming – look at the 7700K v the 1800x in anything apart from games. If ALL you do is game the 1800X is not for you, and I doubt someone who doesn’t understand the architecture, or even the greater review details in any way would spend £500 on a 1800X so move on and stop trolling. An i3 is all you deserve. Muppet.

What about Guru3D that shows @ 4.1 GHz, the 1800X ‘s voltage is 1.526 V. Anyway, my original comment stands. And I’d rather believe Tom Logan than the users’ comments. Mind you, Tom Logan has full praise for the 1800X.

Nano is very sexy

No your original comment doesn’t stand. you only think it does because you are a Tom Logan fanboy – using 1.526V!! (thats dangerous based on what I have read!) Trust me, if it was ASUS and not AMD, he would have already shagged the processor on youtube for advertising dollars.

I had made my comment before I read Tom Logan’s review, and Tom Logan’s voltage reading is lower than KitGuru’s voltage reading: his CPU-Z voltage is 1.384 V at 4091.71 MHz. 1.526 V is the one at Guru3D; head over there and look for it yourself. And if 1.526 V is dangerous, the more my original comment stands.

You seem to want your comments to stand, like its a pride thing. Yet you are just putting faith in other people. Why don’t you buy one, try it out for yourself and see who is right? You don’t believe anything apart from what Tom says so I would suggest you go over there and give him some lovely compliments on his work (which you can’t guarantee is right, but ‘want’ it to be right).

How about you put all the bias in your head to the side and do a proper job yourself. I can’t really put faith in Logan’s Or HIlberts reviews and people testing power consumption on any site which is actually falling outside AMD’s recommended voltage guidance settings (which as far as I know from hearing one site comment on AMD reviewers guide is 1.45v). Sure AMD could be aiming for safe voltage, but until some processors potentially start failing due to voltage settings above their spec, people won’t know. Also when factoring in possible silicon degradation over time, who knows?). I think the 1800X power consumption looks pretty good – especially when is close to the 5960x and 6950X which are more than double and three times the price. Its certainly no bulldozer.

Power consumption (and therefore heat) depends on the voltage AND current (Power = Voltage multiplied by Current, in a simple form. Obviously there are other elements such as ‘copper’ losses for power too). A higher voltage operating at a significantly lower current may result in lower power consumption than a lower voltage operating at higher current – it depends on the numbers for both, not just voltage.

This is potentially what is happening with the Intel voltage vs AMD voltage comparison. The power draw figures in the review show that lower voltage on some Intel chips (vs AMD) can still result in higher power draw (5960X OC @1.3V, 6950X OC @ 1.275V consume more power than Ryzen 7 1800X OC @ 1.44V).
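As a rough illustration of that point, here is a minimal Python sketch of the simple Power = Voltage × Current relation. Only the two voltages (1.3 V and 1.44 V) come from the discussion above; the current figures are made-up placeholders, not measurements from the review, and the function name is just for illustration.

```python
def power_watts(voltage_v: float, current_a: float) -> float:
    """Simple approximation: power = voltage * current (ignores other losses)."""
    return voltage_v * current_a


# Voltages are the ones quoted in the thread; the currents are HYPOTHETICAL
# placeholders chosen only to show the effect, not measured values.
lower_voltage_chip = power_watts(1.30, 120.0)   # lower voltage, higher current
higher_voltage_chip = power_watts(1.44, 100.0)  # higher voltage, lower current

print(f"1.30 V at 120 A -> {lower_voltage_chip:.0f} W")
print(f"1.44 V at 100 A -> {higher_voltage_chip:.0f} W")
```

With these placeholder currents the lower-voltage chip ends up drawing more power, which is why voltage on its own does not settle the heat question.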

Your 4.1GHz @ 1.2V 6800K is a pretty good OC indeed. That’ll draw less power than the 4.2GHz 1.275V 6800K OC data in the power charts, but it’s also slower than a stock Ryzen 7 1800X and 5960X and 6950X in multi-threaded applications due to fewer cores. Heat and power consumption certainly matter, as does performance in the workloads being put on the processor.

Now tell me, which one will produce more heat, 1.199V or 1.45V? And if you don’t believe Guru3D’s CPU-Z screenshot or what this review says, why bother reading at all?

Bother with what? Who said I ever read Guru3D? I don’t. You know my views on OC3D.

Problem is your opening comment is also idiotic – so I don’t think you deserve a really detailed comment. You say ‘Sorry, my 6800K, 4.1 GHz @1.199 V, 100% stable’. So what? It’s a 6 core CPU, not 8 core. It gets absolutely CREAMED by the 1800X in anything outside gaming. Ask Tom, give him a call – see if he agrees. Hilbert might even agree too, between banning people on his forums for saying anything that he doesn’t like.

You are focusing on about the only thing that you think will help discredit AMD’s new release because you know you own a turkey of a processor now. Just look at the performance – and when you come back and look at that 1.199V reading, just pretend you are getting anything close to the same performance the 1800X would give you. Yeah, I agree, it sucks when new, better models come out and you realise what you own was too expensive, and now is much too slow for what you paid for it. It’s just life, Hossein.

And my 4.2GHz is at 1.251V. What I was trying to say was that the 1800X didn’t actually destroy the 6800K. Of course it certainly outperforms the 6800K.

I’m not sure who is the idiot here. If you read my comment carefully, you’d know that my contention was that the 1800X didn’t destroy the 6800K, outperforming, yes, but not destroying.

And you didn’t answer my question as to which one will produce less heat, 1.199 V or 1.45 V?

Not sure what review you have been reading, but if you read ONE that shows anything outside gaming where your 6800K is not getting absolutely ARSEMAULED by the 1800X then you need to pick your sources better. Perhaps the Cartoon Network do tech reviews now for kiddies?

Seriously though, all the Intel fanboys are up in arms today as they realise the fortunes they spent on X99 and a workstation style CPU were wasted. So at least you know it’s not just you that is seriously pissed off today.

The crying yourself to sleep will only last a week, the pain eases soon.

What are u smoking, mate? Cheers:)

Smoking a big fat cigar in celebration! very happy Intel have a competitor now and that arsehats who have been willing to pay £1600 for a 6950X CPU finally can realise that Intel have been ripping them off for years.

Competition is good for everyone. Although I think that should be appended to ‘competition is good for anyone with a brain’.

Honestly though, the 6800k is a pretty good deal compared to the 6950X. Most 6950X owners got ripped off by around £1000 over price. You only paid about £250 more than it should be sold for. Consider yourself lucky.

That was already refuted by the very first reply you got!

The one where he said ‘test our cpu at 4k so that way the test is gpu bound’ LOL. Are you saying you don’t understand how benchmarking works either?

NO, I never said don’t test at 1080P. I want people to understand how to apply a review like this. Namely that, with the exception of a tiny, tiny minority use case, Ryzen will not be behind in gaming for real world use. Even at 1080P with a GTX 1080, in most cases it is still above 100fps anyway, so again for real world gaming it makes NO difference. These two important points are being ignored by most readers and reviewers alike. Also, I never said anything critical of KitGuru, who actually tested in 4K, nor said that anyone was being paid. All I pointed out was that the difference disappears even at 1440P, since they didn’t test it, and said reviewers who only tested in 1080P were doing a disservice, and they are. You really like to straw man, don’t you? Anyway, since KitGuru tested in 4K, it doesn’t apply to them, obviously. Did you not read their review, or are you just lying about what I said to prop up your non-point? But the majority tested at 1080P with a Titan or GTX 1080 and then started painting the narrative that Ryzen is behind in gaming, while never even bothering to mention that for anyone who plays at 1440P or above or with a weaker GPU, the far and away majority of gamers, the difference will be a few fps to none between all the CPUs.

Well, unless you game at 1080p and like very, very high frame rates to match that high refresh screen, which can now hit 240Hz. I mean, it’s a small audience, but it’s still an audience, or ASUS wouldn’t have released the PG258Q panel to target serious gamers who want any kind of edge over opponents online. I would say including those results isn’t so much a disservice as a ‘service to readers who think differently to Josh and want constant 144fps+ at 1080p’. The more results the merrier, I say, but after looking at the KitGuru review it does look like Luke was probably testing for several weeks before this launch.

In the end I think you made it sort of clear what you were trying to say all along, but fuck me with a wet lettuce and barbed wire, it took you some fucking time getting there.

First, any time you are over 60fps a high refresh rate display is useful, not just for the max fps possible. Many people use these monitors at 1440P in conjunction with Freesync/Gsync to eliminate screen tearing in the 60-100 fps range.

Second, in most reviews and in most games, while behind, Ryzen still gets over 100fps, which again will take full advantage of a high refresh monitor and frankly means the differences will be unnoticeable for most people while playing a game. It’s not like 100-120fps or even 80fps is a bad experience. Even when it is the goal, and even with a 7700K, staying right at the monitor’s refresh rate at all times is near impossible anyway. 240Hz is such a fringe thing it’s not even worth commenting on.

Third. Yes, 1080P is most popular, but the fact is for the vast majority it is because they own low-end to mid-range GPUs. People using a GTX 1080 or Titan, as some reviews have used, to play at 1080P in order to get the highest fps possible are a small minority of the already small minority of people who own such cards.

Fourth, when did I say you didn’t show 4K? When was I critical of your review at all? When did I say you should leave out 1080P results? Oh, that’s right, I didn’t say any of that and wasn’t critical of you. 1: I say only showing 1080P results is a disservice. 2: You showed 4K results as well. So 3: my statement doesn’t apply to you, does it?

Yes, time is a concern, but all it takes is one disclaimer sentence saying that at resolutions above 1080P or with lower end GPUs the GPU bottleneck will mean the fps will equalize among the tested processors. Guru3D managed it and even went the extra mile to show some 1440P results. YOU even showed 4K. So unless you have a time machine you’re keeping under your hat, it’s possible despite time constraints. In fact I think you deserve a lot of credit for being one of the few who actually showed results at higher resolutions.

I think the problem here is people are missing my point. I am trying to bring much-needed context and rationality back to this discussion. People, including many reviewers (note I didn’t say you were one of them), look at what number is higher without considering the real world effect it will have, which is often little to none, or whether the test even applies to how they use their computer. Again, in this case it won’t, unless you are using a 1080 or Titan or MAYBE a 1070 at 1080P or below AND want to get as many fps above 100 as you can, regardless of practical impact on your gaming experience. Which, as I’m sure we both know, is not what the vast majority do.

All I wanted to do was point out that the way the reviews were conducted meant that while they showed what the CPU could do when the GPU wasn’t taxed, they didn’t apply to the vast majority of gamers. I also wanted to make sure people knew that even at 1440P the difference goes away for the most part. You only showed 4K, so I wanted to make sure people didn’t get the impression it was only at 4K that the gap closed.

Again, my goal is proper context. In this case that means actually playing games instead of just looking at numbers and declaring a winner. People are saying Ryzen is bad for gaming when that is simply not true for the vast majority of gamers.

Well I had to be verbose because the clear and concise version you, and others, inexplicably couldn’t comprehend to save your life/lives.

Thanks for the reply, but you have missed my point entirely and seem to erroneously think I was being critical of your review. Read my other replies below to sort that out. SMH. I also know why you test the way you do, so I’ll skip over the kindergarten stuff and try to be more concise, and just hope that doesn’t lead to more misunderstanding again. SMH.

My point was the vast, vast majority are always going to be limited by their gpu making the difference between the cpus academic. So while a review like this is good to show the technical progress of a CPU, people need to understand it will not reflect the real world gaming experience for all but a small minority.

you are an idiot .

1: gaming is the biggest market and that’s where this is aimed

2: Kaby Lake and Skylake can both hit 4.8GHz on a majority of models

3: intel has gotten 25% cheaper all across

i3 ? my 4790k running at 4.9 ghz is enough for all my gaming needs , if you want to talk about mini workstation , sure ryzen is great.

you amdfangirls get butt hurt too quick

I have a theory that there could be an issue with the current NVIDIA GPU driver. Almost every review tested with an NVIDIA GPU. It’s possible that AMD’s GPU driver is better optimized for its new CPU.

Broadwell-E 32 GB vs Ryzen 16 GB

Source for the ETA?

I have a different theory. The difference between being able to play a game or not by choosing the ryzen over intel’s midrange i5 or i7 doesn’t exist. Yes, you get lower FPS by going with ryzen on current, unoptimized titles, but if you think about it, you get a better and more powerful cpu that’s more future-proofed than intel’s quad core counterparts.

If i were to build a pc now, i’d actually trade in those fps for a better overall product.

You’re an idiot yourself. The 8c/16t Ryzen CPUs were not designed with gaming in mind, they were designed for the productivity or enthusiast market at an affordable price. Their high end 1800X blows away almost anything Intel has to offer in that segment at half or even a third of the price.

Not everyone games, many people are content creators, just because you play CS GO all day doesn’t mean everyone does so.

Besides, you’d be much more future-proofed with a ryzen rather than your overclocked 4c/8t skylake/kabylake chip.

Intel stupid fan at its best, you’re the perfect example, failing to understand the article and the targeted market of a given chip.

If you game, and you game now and you’re also using the best gpu there is, yeah, go intel, as long as you play on 100hz+ panels.

If you on the other hand produce content or work with your pc rather than simply playing a game, or if you play a game on a midrange GPU or the entry level of high-end gpu market, then going amd ryzen will benefit you more, games will offer virtually the same performance while encoding and any other high-threaded work will rip your intel’s 4c/8t apart.

Of course, to be able to do this math, you need a brain so you can think about what you pay and what you get in return, if you’re simply a fanboy, then there’s no cure, just buy intel and be happy about it.

At this point, I think it’s an optimization issue, but not from games themselves, but the graphics drivers. If it was from the games, not every one of them would follow the same pattern (much in the same way of all the rest of the software).

It’s not a matter of PCI-E/GPU performance (since making it the bottleneck with resolutions/settings removes the difference), it’s not a problem with threads/clocks (since it trails behind in games where the 6900K outperforms the 7700K), and the SMT thing doesn’t explain most of it (disabling it helps a bit here and there, but doesn’t change the whole picture a lot)…

The elephant in the room is the graphics drivers, the one super-micro-optimized (quite possibly with the overwhelmingly dominant Intel architecture in mind) piece of software that each and every one of them uses under the hood.

Every single test I’ve seen has been with Nvidia cards/drivers. Testing with Radeon might shed some light on the issue by changing that variable (especially because they could have had access to Ryzen sooner, and even if not, their drivers have always been less super-optimized than Nvidia’s to begin with).

http://www.legitreviews.com/amd-ryzen-7-1800x-1700x-and-1700-processor-review_191753/15

So salty your eyes aren’t working properly!! LEARN TO READ M8 AH STATE OF YOU!!

OK here is what AMD’s John Taylor told PCWorld:

“CPU benchmarking deficits to the competition in certain games at 1080p resolution can be attributed to the development and optimization of the game uniquely to Intel platforms—until now. Even without optimizations in place, Ryzen delivers high, smooth frame rates on all ‘CPU-bound’ games, as well as overall smooth frame rates and great experiences in GPU-bound gaming and VR. With developers taking advantage of Ryzen architecture and the extra cores and threads, we expect benchmarks to only get better, and enable Ryzen to excel at next-generation gaming experiences as well. Game performance will be optimized for Ryzen and continue to improve from at-launch frame rate scores.”

I would too.

The problem is, the claim for current highest performing gaming CPU still resides with the 7700K, the 6700K, and maybe the 6900K depending on the game. So while I would suggest that anyone who is upgrading seriously consider Ryzen’s future precedence, there is no denying that if you want the best performance now in all games at 1080p, Intel is the one that offers that. Even the 7600K beats the 1800X at a significantly lower price. That’s Intel’s niche. AMD’s niche is again more to do with the fine wine theory and overall value for money, something they are now synonymous with.

I have updated some of the gaming charts to include test data for the i3-7350K at stock and overclocked now that it has been obtained and validated.

Well, to be fair, if you are gaming at 1080p what speed does your monitor even run at? Because let’s face it, most monitors top out at 60Hz, so anything over 60FPS is just pointless. Also, if you don’t have the money to get a higher resolution monitor then buying a premium graphics card or the 7700K is also pointless. An i3 and a 1060/480 will give you the frames you need for 1080p gaming.

Just looking at these benchmarks. The performance is mostly well below that of an i5.

However, on other websites it shows the 1800x beating the stock i5’s in F1 2016, Shadow Warrior 2, Watchdogs 2, Anno 2205, Dishonored 2, and just sub par equal in Doom Vulkan.

I’m not angry because that particular website is missing some of the ones where AMD is losing like Gears of War 4 etc. Anyhow, I understand they can’t benchmark ‘everything’. That is why there are so many different review sites. The other website was not cherry picking good benchies as they had the Tomb Raider loser in there.

The good to take from this website is the difference between an OC’d i5 and the 1800X. The ones that claim AMD is marginally beating the i5s don’t include the OC of the i5. Seeing all these significant losers changes the viewpoint of performance… but then when I look at winners… I have dual personalities… YESS… NO….

So how is this going to be solved? I’m just guessing the 1600X will perform similarly to the 1800X (same clock speeds). So it will be a battle of price to performance ratio.

Well a lot of us Intel Fanboys owe an apology I guess. I am sorry, I was wrong. Zen is actually pretty good!

Oh wait, I’m a gamer, I don’t care about AMD still, cause they lag on that! OH HECK YEAH! Hope you go bankrupt AMD, your products are probably made in asian sweat shops in terrible conditions and low wages WHOOOO!!! *spins shirt like muscle man*

I DOUBTED AMD!!!!

Can you blame me? Buttdozer and Poopscavator really stunk, and it just seemed like more cheap talk from AMD yet again when they talked about ZEN.

SO enjoy your elation and temporary victory AMD nerds. My dark side boys are hard at work over at intel on their DEATH STAR! You rebels are gonna pay DEARLY for this travesty! And by pay, I mean, not pay as much as us intel fans, cause we are overcharged at the moment, but STILL! YOU WILL PAY! *shakes finger at you*

Intel is working on super secret chips that run at 100,000 ghz. I am telling you! Watch out! We’ll be back! There can ONLY BE ONE in the PC MASTER RACE! In the meantime I WILL let the door hit me on the ass on the way out. Have a nice day.

But 7700K is cheaper than 1700….

sucks at multi-threading? have you even watched any reviews? even Gamers Nexus would give the multi-threading award to the 1800x vs the 6900k.

As much as I’m rooting for AMD, I don’t think that’s the proper way to test a CPU.

When testing a cpu, they need to remove any bottlenecks from the gpu as much as possible. Hence, the titans or 1080’s.

Also, I think you misunderstood how graphics are rendered. The GPU alone cannot “decide” what the fps is. There’s this thing called “Draw Calls”. You can read up on it here:

https://medium.com/@toncijukic/draw-calls-in-a-nutshell-597330a85381

This is just the first week of Ryzen out in the wild. Like what AMD has said, it still needs optimizations like any other new architecture.

Yeah, that’s another point altogether. The market of guys who buy 7700Ks and 1080s and still game at 1080p is not massive, but it is still alive and kicking. That’s what I mean when I say that is Intel’s niche. There is a large enough market for Intel there, something AMD’s CPUs are not as competitive in. Also, you have to consider the negative outlook people now have of Ryzen when it comes to 1080p gaming. A less savvy consumer might misconstrue that as overall performance. And then you’ll have Intel fanboys using Ryzen 1080p performance as fuel to further their agenda. That negativity will hurt sales even if it’s unjustified.

I know what draw calls are. SMH. I was using terms to make it easy to understand since people seem to be willfully misunderstanding what I am saying. Like you for example. So I’ll just try again.

My point is that the vast majority will have about the same fps no matter what they use CPU-wise when we’re talking Ryzen, Broadwell-E and Sky/Kaby Lake 4 cores. This is because their GPU is the bottleneck. The problem with only testing with a Titan or GTX 1080 at 1080P is that you then have to explain that it doesn’t represent real world gaming performance if you use a higher resolution or lower end GPU, and is only done to show the technical capability of the processor. But almost no reviewers did that.
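The bottleneck argument can be put into a very rough Python sketch: in a simplified model the delivered frame rate is set by whichever of the CPU or GPU is slower. Every number below is hypothetical and chosen only to illustrate the argument, and the function name is made up; real games do not behave this cleanly.

```python
def delivered_fps(cpu_fps: float, gpu_fps: float) -> float:
    """Simplified model: the slower of the two stages sets the frame rate."""
    return min(cpu_fps, gpu_fps)


# All figures are HYPOTHETICAL, not taken from any review.
cpu_a, cpu_b = 150.0, 120.0        # frames/s each CPU could prepare
fast_gpu, slow_gpu = 200.0, 70.0   # frames/s each GPU setup could render

# Very fast GPU (e.g. low resolution): the CPU gap shows up in the result.
print(delivered_fps(cpu_a, fast_gpu), delivered_fps(cpu_b, fast_gpu))

# GPU-bound setup (higher resolution or weaker card): both CPUs land on the same figure.
print(delivered_fps(cpu_a, slow_gpu), delivered_fps(cpu_b, slow_gpu))
```

Under this model, a CPU test with a top-end card at 1080P exposes the gap between processors, while a GPU-bound setup hides it.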

You don’t seem to grasp the concept of applying test data to real world use cases, the most common of which is 1080P with something like a 1060 or 1050 Ti or older GPUs. Even among owners of 1080s it is far more common to be playing at 1440P or 4K, where the CPU makes little difference. When you consider the way the vast majority of people actually play games, you can see that even as it is now, without optimizations and launch day bug fixes, Ryzen is more than enough for gaming, and will be for at least as long as the 7700K, but probably longer due to its cores.

I’m sorry, but I would say that market is in fact non-existent. If you have a 1080 and are playing on a 1080p monitor then you are an idiot. Even if you are, Ryzen still delivers enough frames for you not to notice a difference in performance. All these Intel fanboys going on and on about 1080p performance would not be able to tell the 2 systems apart in games side by side, because the difference between 90FPS and 150FPS is negligible at best. Also, you did not respond to the fact that most monitors cannot refresh fast enough to even display more than 60FPS, making any more just bragging rights.

Different microarchitecture, can’t compare that easily with Intel CPUs, they don’t work the same way. Also, 3D software and benchmarks can be highly parallelized while games are serialized, and that ends up following Amdahl’s law: throwing more cores at it won’t necessarily give more performance, and it might even make performance worse by bottlenecking the main game thread.

The major problem confusing people is exactly this one. They think that because Ryzen does so great in 3D software or Cinebench, it should do the same in gaming, except games are an entirely different beast and can’t be compared directly. Unfortunately it isn’t in AMD’s interest to explain this; they prefer to keep things confusing since there are so many reviews with GPU bottlenecks that make Ryzen close in on the i7s in performance, and AMD fans themselves got their pitchforks out against reviews they don’t like.

It’s not just a matter of quality of programming. That does have an impact, however the major issue is how game design works. Even Ashes of the Singularity, the tech demo for DX12 and multithreading in games, shows that.

Also, AMD has given the same excuses in the past for Vishera and prior, blaming game optimizations, Windows scheduling, etc. And after some time passed it was shown nothing changed. I don’t expect that to change this time either. It’s an awesome CPU for work, “bad” choice for gaming.

There are a lot of idiots out there. 😉

In all seriousness, some people will buy new hardware and keep playing with the same monitor because they want every setting at the max (without exception) and want it at very high frame rates. 60 FPS is not enough for some folks. They want 144 FPS in GTA V at 1080p with every setting cranked. You need more than a GTX 1080 to achieve that consistently in GTA V even at 1080p. There are always people at the extremes. The reason why they’re not out in public might be because they don’t want to be ridiculed for the way they spend their money and game on their systems. Maybe they’re just happy to stay in the background doing their thing.

A lot of people are still gaming on 60Hz 1080p monitors, yeah, but those would not likely be buying a GTX 1080. They’re buying RX 470’s and the like. In that case a Ryzen CPU is no worse than an Intel i5 or i7. But that then falls into the category of who is buying a £330 minimum CPU with a £150 monitor and GPU?

1440p and beyond was a good reason to start gaming on a PC again.

I assumed you have a vague idea of what frame rendering is because you said “for 99% of people it will be the GPU deciding the FPS not the CPU” which is not the case.

Alright, look. Fact of the matter is they’re testing the high-end CPU lineup here. If they put a mid-range or low-end GPU there, then that will be a GPU test, as it’s hard for the 1800X to bottleneck low-end to mid-range cards. Sure, it may be a real-world scenario, but they need to consider which of the components is actually being tested in a given scenario.

I think what you’re looking for is a gameplay video on youtube. Try looking for gameplay videos with the specs you’d like to see.

That, or you just need to wait for the R5 and the R3 lineup. Test data on those would apply more to real-world use cases with low-end to mid-range GPU.

You can also wait for GPU benchmarks (if a new GPU line came out) to see benchmarks with high-end CPUs.

How is it more future proof when it is slower at gaming than virtually any 4c/8t i7 since Sandy Bridge? You can’t say it is more future proof when Intel CPUs from years ago beat it and don’t bottleneck the GPU.

You’re not entirely right nor wrong. Yes, games are usually optimised for few cores, and yes, AMD said that, but if you pay close attention, Bulldozer, Vishera, bla bla bla (there’s just too many bad designs lol) never caught up with Intel even in synthetic or 3D apps, etc., and we know why it wasn’t delivering (cuz cores shared caches and other resources). This problem, we know, is not present in Ryzen, and as you can see the difference is little, so yes, I do believe optimisations will put Ryzen in a position to compete in gaming with the 6900K, but not with the 7700K, not now at least. What’s more, as you know, CPU technology has stagnated; there’s not much room for single core improvement, yet games keep getting more and more demanding each year on the CPU, so yes, they’re not programmed to use 16 threads today but they will be in 1 or 2 years, since there will be no alternative for it! And low level APIs are already here to make this possible.

I can see why game developers didn’t bother optimising games for lots of cores: almost no gamer had a CPU with more than 4 cores and they would need to train people for this. The only processors with high core counts were from AMD and they were garbage, so why bother? Now they NEED to bother cuz that’s the only source of more compute power, and we do have 8 cores on mainstream, from both AMD and Intel (I’m talking about hyperthreaded 4 core Intel CPUs like the 7700K).

AMD basically released a CPU that isn’t compatible with the Windows 10 scheduler, this is why it gets better performance when SMT is disabled. “The scheduler does not appropriately recognize Ryzen’s cache size and cannot distinguish physical cores from SMT threads. This in turn is causing it to often incorrectly schedule tasks in the much slower — approximately 4 times slower — SMT threads rather than primary physical core threads”

“What’s more, as you know, CPU technology has stagnated; there’s not much room for single core improvement, yet games keep getting more and more demanding each year on the CPU, so yes, they’re not programmed to use 16 threads today but they will be in 1 or 2 years, since there will be no alternative for it! And low level APIs are already here to make this possible.”

Go look into Amdahl’s Law. More cores don’t bring a lot of gains past a certain point; games are heavily serialized and sometimes more cores can end up giving worse performance. It’s not my opinion, it’s a fact of how game design works.
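For anyone who wants to see the numbers, here is a small Python sketch of Amdahl’s Law, speedup = 1 / ((1 - p) + p / n), where p is the fraction of the work that can run in parallel and n is the core count. The two values of p used below are hypothetical examples, not measurements of any game or benchmark.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's Law: speedup = 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# HYPOTHETICAL parallel fractions; real workloads vary widely.
workloads = (("mostly serial workload", 0.50), ("highly parallel workload", 0.95))
for label, p in workloads:
    row = ", ".join(f"{n} cores: {amdahl_speedup(p, n):.2f}x" for n in (1, 2, 4, 8, 16))
    print(f"{label} (p={p}): {row}")
```

With p = 0.5 the speedup can never reach 2x no matter how many cores are added, while p = 0.95 keeps scaling for longer. Note that the formula only models diminishing returns; any case where extra cores make things slower comes from overheads it does not capture.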

I know about the scheduler problems.

You’re talking about multi-threading in games? Most games don’t even fully utilize all threads right now. Good multi-threaded tests come from productivity software such as Cinebench, Handbrake, and the like, which actually use ALL CORES/THREADS. And guess what? That’s AMD’s territory now.

Even if a game were to utilize all threads, such as DX12 or Vulkan titles, have you even looked at graphs other than max or average fps?

But the i7-7700K can’t compile / transcode / render as fast as the 1700, and the price difference between the 7700K and 1700 is not proportional to the gap in their multithreaded performance, so the 1700 is better value.

I do use a PC for work. If I want to game, then:

1: purchase console,

2: use PC with highly clocked 2-4 core CPU + maybe OC it and pair with equivalent GPU.

Have you understood what I said? Or maybe you don’t understand Amdahl’s law, so let’s start with it. Amdahl’s law doesn’t say you can’t always get more performance by parallelizing. It says that if you’re trying to adapt an existing program to run in parallel, IF any part of the program cannot run in parallel, then you’ll be limited by that part in the amount of speedup you can get. But guess what: if you’ve started your program from scratch thinking in parallel computing, you just wouldn’t have any part of it unable to run in parallel. You see, if you just couldn’t make a program run 100% in parallel then GPUs wouldn’t be a thing… I mean, GPUs have thousands of cores and see how much better at processing they are. I subscribed to Nvidia’s Iray beta when it came out, and gosh, how that thing works… A scene that would take hours to render on a CPU renders in just a few minutes on a GPU… And that brings me to what I’ve said: game developers won’t have anywhere else to get the performance their games need, so they’ll adapt to use more cores.

Game design isn’t parallel. Period. Tons of threads are created for tons of stuff just like you said, and pretty much all of them have to go through the main game thread on 1 CPU core, and that’s the bottleneck in Amdahl’s law.

Jesus Josh, just shut up. you are waffling on so much and are contradicting yourself on earlier posts – KitGuru got it right and you know it – just because Luke said he didn’t have time to test 1440p is hardly an issue – your main point was ‘OMG its a great CPU, at higher resolutions it doesn’t matter which CPU you use, you could in fact even use a potato and it would be ok’ – and KitGuru tested 4k.

Such a smug, self-involved, egotistical guy. I love how you quote ‘Proper context’. I’d love to see you try anything close to the quality of this review. And STOP quoting GURU3D – Hilbert is a complete muffler – his grasp of English is so bad it pains me to read his reviews. Especially as he tries to be funny all the time and comes across as the comic you go to watch in Butlins and end up wanting to pound with tomatoes.

sassafras15 – it’s best you stop trying to understand Josh’s logic. He doesn’t work on the basis of logic – he wants sites to ignore 1080p testing and focus on situations where the publications are just testing the graphics card. The points that 1080p is the most popular resolution, and that many gamers here (as I’ve read in these discussions many times) use 1920×1080 or 2560×1080 monitors at high refresh for gaming, aren’t important to him.

So not only does he want bad testing which is basically showing GPU performance not CPU performance but he doesn’t care about the most popular resolution for gamers – he just wants Ryzen to look completely perfect and works his logic around that.

I want RYZEN to do well, as my posts in here show, but all this fanboy nonsense just does my head in.

No, no, no. A lot of KitGuru readers (as their polls show) are gaming at 1080p with 120Hz, 144Hz or even 240Hz panels. You can’t do that with a 1060 or 1050 Ti – unless you want to run at 60fps. Do some research, for God’s sake!

Please stop delving into subject matter in which you obviously have no idea what you’re talking about.

Wow. So in other words, I’m right and you have no argument to make. So you’re just going to spew personal attacks. Got it. No need to further reply. You’ve already embarrassed yourself thoroughly. The sad part is you don’t even know it.

Quote: You’re an idiot yourself. The 8c/16t Ryzen CPUs were not designed with gaming in mind, they were designed for the productivity or enthusiast market at an affordable price.

I think you are the Idiot after all! Watch this carefully and listen! http://www.amd.com/en/ryzen

Today is a really big day for AMD and actually is a really big day for ALL PC Gamers and content creators.

Well.. it turns out that it is not really a big day for gamers, and I doubt that it is a big day for content creators.

Quote: Did you read the results? If ALL you do is game, the 1800X is not for you, and I doubt someone who doesn’t understand the architecture, or even the greater review detail.

I think you haven’t watched the official video from AMD regarding the Ryzen 1800X… they even claimed that it beats the Intel i7 6900K, which is a lie!

http://www.amd.com/en/ryzen

Quote: If you read my comment carefully, you’d know that my contention was that the 1800X didn’t destroy the 6800K, outperforming, yes, but not destroying.

I think there is a lot of confusion everywhere in the reviews regarding the Ryzen 1800X and the i7 6800K, i7-6950X, i7-7700K and i7-6700K.

There is a video on AMD’s official site regarding the Ryzen 1800X: http://www.amd.com/en/ryzen

First of all.. the Ryzen 1800X has never been compared to the i7 6800K, i7-6950X, i7-7700K or i7-6700K by AMD, so there is no point in even mentioning the other CPUs!

It was compared to the Intel i7 6900K. She said that our competitors set the price at $1000 and AMD Ryzen at $499, and that the Ryzen 1800X is the fastest 8-core processor on the market, which is not TRUE!

Also, there is an update at the end of the video which is worth reading, with quite interesting statements.

p.s. However, and unfortunately, the i7 6900K still shafts the Ryzen 1800X in every WAY!

Yeah..Lol

Ryzen 1800X runs at a 3.6GHz base speed and boosts way up to 4.0GHz. WOW man! Holy cow.. way up to 4.0GHz, that’s a sensational boost speed. LoL

Quote: Seriously though, all the Intel fanboys are up in arms today as they realised their fortunes spent for X99 and a workstation style CPU was wasted. So at least you know it’s not just you that is seriously pissed off today.

Do you actually know the release date of the X99 platform, you Muppet??

It was released back in 2014.. that’s nearly 3 years ago!

And what are you going to offer today?? The AMD® X370 platform: no quad-channel memory, and PCI Express lanes that are Gen 2.0 and not Gen 3.0.

A 24-LANE CPU against a 40-LANE CPU

And finally they add an M.2 socket to the X370 platform.. WOW man, that is extraordinary! I had an M.2 SOCKET on my old Z97 chipset.

p.s. It doesn’t look like you’re smoking a big fat cigar… more likely it’s horseshit!

It should be called a Cattlepillar.. as there are plenty of cattle following it!

to Luke:

Quote: I have updated some of the gaming charts to include test data for the i3-7350K at stock and overclocked now that it has been obtained and validated.

Hi Luke, why is there no i7 6900K in any of your benchmark charts???

As you may be aware, the Ryzen 1800X was aimed particularly at the Intel i7 6900K according to AMD’s official video at http://www.amd.com/en/ryzen, and according to AMD’s testing lab the 1800X beats the Intel 6900K at half the price!

So are we going to see an update with the i7 6900K in your charts?? That would be interesting..

Thanks

Infinite Fabric seems to have a tear

Hi Tony.

We don’t have a 6900K sample and have never worked with one unfortunately. We decided it was best to invest over £1500 in a 6950X for comparison and make do with the 5960X as the 8-core comparison, rather than have no data from Intel’s fastest consumer CPU – the 10-core 6950X.

Hi Luke,

Thanks for your reply.

Unfortunately I haven’t been looking for the investment.

And I’m not talking about the 6950X, so why did you even mention it??

All I said is that it should be the i7 6900K in your comparison review against the Ryzen 1800X, as was the case in AMD’s video!

As far as I remember the 8-core 5960X was released back in 2014, if I’m not mistaken.. and you compare a nearly 3-year-old CPU to a new 2017 CPU??? Instead of, as you said, investing in an i7 6900K for a fair review..

After all.. that’s what AMD was claiming in their official video: http://www.amd.com/en/ryzen

Intel was lying in the past about the performance and speed of certain products, AMD has been lying about their products.. Nvidia has been lying as well.. etc.

And now so-called tech sites nearly all over the NET have been misleading or confusing the public as well with THEIR bogus and biased reviews.

Really disappointing…

Hi Tony,

We don’t have a 6900K so couldn’t gather its data unfortunately. The 6950X was brought into the discussion because you specifically asked why there is no 6900K data in the tests. The reason is that we instead invested more money in getting the fastest consumer CPU on the planet so that we could interpret where Ryzen 7 performance stood in the overall picture. The 5960X was indeed released in 2014 but its performance is similar to the 6900K as they are both 8C16T, with BDW-E being a process node shrink rather than an overhaul to the architecture. I would also guess that the 5960X is more popular than the 6900K, given that it was the fastest consumer chip for a long time, but I do not have any usage figures to check if that is indeed the case.

Not sure why a ‘fair review’ is being questioned. You could look at the comparison from a price perspective and the logic that people considering buying a £329-£500 Ryzen 7 aren’t going to spend double/treble the amount on a 6900K. By which logic, the £329-£500 Intel competitors are the most ‘fair’ comparisons.

If you are ‘disappointed’ with the reviews on tech sites ‘nearly all over the net’ then perhaps you should consider purchasing the processors yourself and doing your own testing to suit your own needs. There’ll be no need for claims of bias, collusion, or disappointment when you then find that the results of those tech sites ‘nearly all over the net’ are indeed a true interpretation of the performance numbers.

Bingo!

Notice that a few games score on par with a 6900K, like Mafia 3, Doom, Metro and Overwatch (games that are known for solid code and lots of heavy special FX), but those coded for 2 cores (so 10 years ago) mostly rely on just cache speed and CPU frequency.

Usually that’s companies that just wanna churn out cookie-cutter stuff or are pressured by shareholders to release half-assed betas, either that or they’re just happy being lazy, releasing the same thing every other year.

Content creators use all of those cores and the results simply rock. In gaming it has fewer frames but much more stable minimums, which is again great.

With Intel’s mainstream processors, that sell by the truck load to enthusiast PC builders, still limited to four physical cores, many people simply do not see the need to upgrade their ageing piece of silicon. Add in the ~£400 buy price for Intel’s cheapest more-than-four-core enthusiast CPU and it’s easy to see why so many people are holding on so tightly to their ‘good enough’ processor and investing that upgrade budget elsewhere.

Hello, it’s a very helpful article, please keep going

How can a chip score so well in benchmarks (both single and multi-core) and then do rather poorly in gaming performance? Is this an issue to do with the quality of how the games are programmed?

All Ryzen CPUs feature an unlocked multiplier that allows them to be overclocked without adjusting the BCLK, using a compatible motherboard chipset.

Well then, this is the End Of The Line!!!

In the Handbrake results it says that the CPU was overclocked but it’s not clear that the Memory was overclocked to the limit.

For example the BioStar X399 MB’s Memory Compatibility Lists show G.Skill Memory that can overclock beyond 4GHz and various Websites report that the Zen lineup benefits greatly from faster Memory – if the Ryzen tests had highly overclocked Memory they could have done better on Handbrake.

PS: Thanks for running these Tests and for the great Articles on this Website.